a review of Yasha Levine, Surveillance Valley: The Secret Military History of the Internet (PublicAffairs, 2018)

by Zachary Loeb

~

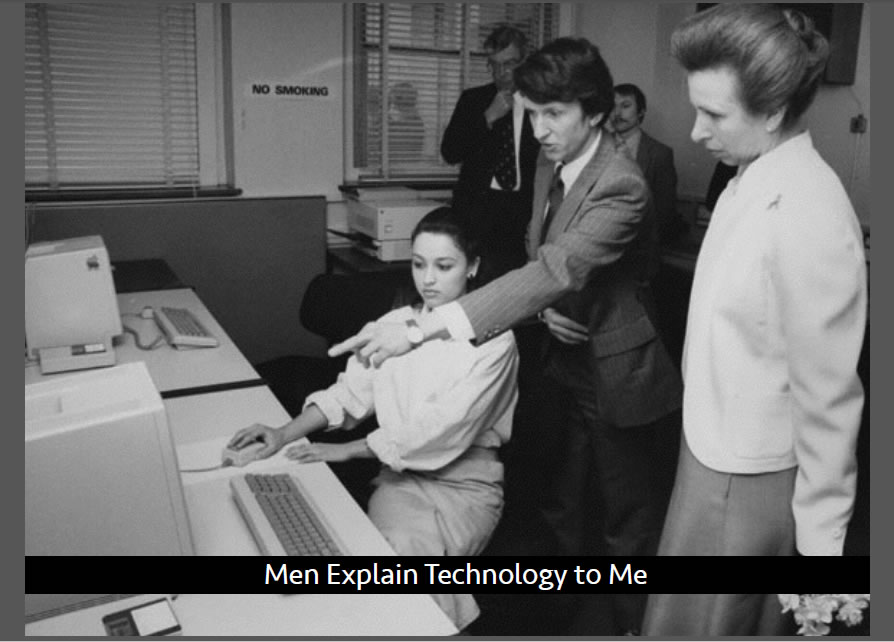

There is something rather precious about Google employees, and Internet users, who earnestly believe the “don’t be evil” line. Though those three words have often been taken to represent a sort of ethos, their primary function is as a steam vent – providing a useful way to allow building pressure to escape before it can become explosive. While “don’t be evil” is associated with Google, most of the giants of Silicon Valley have their own variations of this comforting ideological façade: Apple’s “think different,” Facebook’s talk of “connecting the world,” the smiles on the side of Amazon boxes. And when a revelation troubles this carefully constructed exterior – when it turns out Google is involved in building military drones, when it turns out that Amazon is making facial recognition software for the police – people react in shock and outrage. How could this company do this?!?

What these revelations challenge is not simply the mythos surrounding particular tech companies, but the mythos surrounding the tech industry itself. After all, many people have their hopes invested in the belief that these companies are building a better, brighter future, and they are naturally taken aback when they are forced to reckon with stories that reveal how these companies are building the types of high-tech dystopias that science fiction has been warning us about for decades. And in this space there are some who seem eager to allow a new myth to take root: one in which the unsettling connections between big tech firms and the military-industrial complex are something new. But as Yasha Levine’s important new book, Surveillance Valley, deftly demonstrates, the history of the big tech firms, complete with its panoptic overtones, is thoroughly interwoven with the history of the repressive state apparatus. While many people may be at least nominally aware of the links between early computing, or the proto-Internet, and the military, Levine’s book reveals the depth of these connections and how they persist. As he provocatively puts it, “the Internet was developed as a weapon and remains a weapon today” (9).

Thus, cases of Google building military drones, Facebook watching us all, and Amazon making facial recognition software for the police need to be understood not as aberrations. Rather, they are business as usual.

Levine begins his account with the war in Vietnam, and the origins of a part of the Department of Defense known as the Advanced Research Projects Agency (ARPA) – an outfit born of the belief that victory required the US to fight a high-tech war. ARPA’s technocrats earnestly believed “in the power of science and technology to solve the world’s problems” (23), and they were confident that the high-tech systems they developed and deployed (such as Project Igloo White) would allow the US to triumph in Vietnam. And though the US was not ultimately victorious in that conflict, the worldview of ARPA’s technocrats was, as was the linkage between the nascent tech sector and the military. Indeed, the tactics and techniques developed in Vietnam were soon to be deployed for dealing with domestic issues, “giving a modern scientific veneer to public policies that reinforced racism and structural poverty” (30).

Much of the early history of computers, as Levine documents, is rooted in systems developed to meet military and intelligence needs during WWII – but the Cold War provided plenty of impetus for further military reliance on increasingly complex computing systems. And as fears of nuclear war took hold, computer systems (such as SAGE) were developed to surveil the nation and provide military officials with a steady flow of information. Along with the advancements in computing came the dispersion of cybernetic thinking which treated humans as information processing machines, not unlike computers, and helped advance a worldview wherein, given enough data, computers could make sense of the world. All that was needed was to feed more, and more, information into the computers – and intelligence agencies proved to be among the first groups interested in taking advantage of these systems.

While the development of these systems of control and surveillance ran alongside attempts to market computers to commercial firms, Levine’s point is that it was not an either/or situation but a both/and: “computer technology is always ‘dual use,’ to be used in both commercial and military applications” (58) – and this split allows computer scientists and engineers who would be morally troubled by the “military applications” of their work to tell themselves that they work strictly on the commercial, or scientific, side. ARPANET, the famous forerunner of the Internet, was developed to connect computer centers at a variety of prominent universities. Reliant on Interface Message Processors (IMPs), the system routed messages across the network through a variety of nodes, and if one node went down the system would reroute the message through other nodes – it was a system of relaying information built to withstand a nuclear war.

Though all manner of utopian myths surround the early Internet, and by extension its forerunner, Levine highlights that “surveillance was baked in from the very beginning” (75). Case in point, the largely forgotten CONUS Intel program that gathered information on millions of Americans. By encoding this information on IBM punch cards, which were then fed into a computer, law enforcement groups and the army were able to access information not only regarding criminal activity, but also regarding activities protected by the First Amendment. As news of these databases reached the public they generated fears of a high-tech surveillance society, leading some Senators, such as Sam Ervin, to push back against the program. And in a foreshadowing of further things to come, “the army promised to destroy the surveillance files, but the Senate could not obtain definitive proof that the files were ever fully expunged” (87). Though there were concerns about the surveillance potential of ARPANET, its growing power was hardly checked, and more government agencies began building their own subnetworks (PRNET, SATNET). Yet, as they relied on different protocols, these networks could not connect to each other, until TCP/IP, “the same basic network language that powers the Internet today” (95), allowed them to do so.

Yet surveillance of citizens, and public pushback against computerized control, is not the grand origin story that most people are familiar with when it comes to the Internet. Instead the story that gets told is one whereby a military technology is filtered through the sieve of a very selective segment of the 1960s counterculture to allow it to emerge with some rebellious credibility. This view, owing much to Stewart Brand, transformed the nascent Internet from a military technology into a technology for everybody “that just happened to be run by the Pentagon” (106). Brand played a prominent and public role in rebranding the computer, as well as those working on the computers – turning these cold calculating machines into doors to utopia, and portraying computer programmers and entrepreneurs as the real heroes of the counterculture. In the process the military nature of these machines disappeared behind a tie-dyed shirt, and the fears of a surveillance society were displaced by hip promises of total freedom. The government links to the network were further hidden as ARPANET slowly morphed into the privatized commercial system we know as the Internet. It may seem mind boggling that the Internet was simply given away with “no real public debate, no discussion, no dissension, and no oversight” (121), but it is worth remembering that this was not the Internet we know. Rather it was how the myth of the Internet we know was built. A myth that combined, as was best demonstrated by Wired magazine, “an unquestioning belief in the ultimate goodness and rightness of markets and decentralized computer technology, no matter how it was used” (133).

The shift from ARPANET to the early Internet to the Internet of today presents a steadily unfolding tale wherein the result is that, today, “the Internet is like a giant, unseen blob that engulfs the modern world” (169). And in terms of this “engulfing” it is difficult not to think of a handful of giant tech companies (Amazon, Facebook, Apple, eBay, Google) that are responsible for much of that. In the present Internet atmosphere people have become largely inured to the almost clichéd canard that “if you’re not paying, you are the product,” but what this represents is how people have, largely, come to accept that the Internet is one big surveillance machine. Of course, feeding information to the giants made a sort of sense: many people (at least early on) seem to have been genuinely taken in by Google’s “Don’t Be Evil” image, and they saw themselves as the beneficiaries of the fact that “the more Google knew about someone, the better its search results would be” (150). The key insight that firms like Google seem to have understood is that a lot can be learned about a person based on what they do online (especially when they think no one is watching) – what people search for, what sites people visit, what people buy. And most importantly, what these companies understand is that “everything that people do online leaves a trail of data” (169), and controlling that data is power. These companies “know us intimately, even the things that we hide from those closest to us” (171). ARPANET found itself embroiled in a major scandal, in its time, when it was revealed how it was being used to gather information on and monitor regular people going about their lives – and it may well be that “in a lot of ways” the Internet “hasn’t changed much from its ARPANET days. It’s just gotten more powerful” (168).

But even as people have come to gradually accept, by their actions if not necessarily by their beliefs, that the Internet is one big surveillance machine, events still periodically puncture this complacency. Case in point: Edward Snowden’s revelations about the NSA, which splashed the scale of Internet-assisted surveillance across the front pages of the world’s newspapers. Reporting linked to the documents Snowden leaked revealed how “the NSA had turned Silicon Valley’s globe-spanning platforms into a de facto intelligence collection apparatus” (193), and these documents exposed “the symbiotic relationship between Silicon Valley and the US government” (194). And yet, in the ensuing brouhaha, Silicon Valley was largely able to paint itself as the victim. Levine attributes some of this to Snowden’s own libertarian political bent: as he became a cult hero amongst technophiles, cypherpunks, and Internet advocates, “he swept Silicon Valley’s role in Internet surveillance under the rug” (199), while advancing a libertarian belief in “the utopian promise of computer networks” (200) similar to that professed by Stewart Brand. In many ways Snowden appeared as the perfect heir apparent to the early techno-libertarians, especially as he (like them) focused less on mass political action and more on doubling down on the idea that salvation would come through technology. And Snowden’s technology of choice was Tor.

While Tor may project itself as a solution to surveillance, and be touted as such by many of its staunchest advocates, Levine casts doubt on this. Noting that, “Tor works only if people are dedicated to maintaining a strict anonymous Internet routine,” one consisting of dummy e-mail accounts and all transactions carried out in Bitcoin, Levine suggests that what Tor offers is “a false sense of privacy” (213). Levine describes the roots of Tor in an original need to provide government operatives with an ability to access the Internet, in the field, without revealing their true identities; and in order for Tor to be effective (and not simply signal that all of its users are spies and soldiers) the platform needed to expand its user base: “Tor was like a public square—the bigger and more diverse the group assembled there, the better spies could hide in the crowd” (227).

Though Tor had spun off as an independent non-profit, it remained reliant for much of its funding on the US government, a matter which Tor aimed to downplay by emphasizing its radical activist user base and by forming close working connections with organizations like WikiLeaks that often ran afoul of the US government. And in the figure of Snowden, Tor found a perfect public advocate, who seemed to be living proof of Tor’s power – after all, he had used it successfully. Yet, as the case of Ross Ulbricht (the “Dread Pirate Roberts” of Silk Road notoriety) demonstrated, Tor may not be as impervious as it seems – researchers at Carnegie Mellon University “had figured out a cheap and easy way to crack Tor’s super-secure network” (263). To further complicate matters, Tor had come to be seen by the NSA “as a honeypot”: to the NSA, “people with something to hide” were the ones using Tor, and simply by using it they were “helping to mark themselves for further surveillance” (265). And much of the same story seems to be true for the encrypted messaging service Signal (it is government funded, and less secure than its fans like to believe). While these tools may be useful to highly technically literate individuals committed to maintaining constant anonymity, “for the average users, these tools provided a false sense of security and offered the opposite of privacy” (267).

The central myth of the Internet frames it as an anarchic utopia built by optimistic hippies hoping to save the world from intrusive governments through high-tech tools. Yet, as Surveillance Valley documents, “computer technology can’t be separated from the culture in which it is developed and used” (273). Surveillance is, and has always been, at the core of the Internet – whether the all-seeing eye be that of the government agency or the corporation. And this is a problem that, alas, won’t be solved by crypto-fixes that present technological solutions to political problems. The libertarian ethos that undergirds the Internet works well for tech giants and cypherpunks, but a real alternative is not a set of tools that allow a small technically literate gaggle to play in the shadows, but a genuine democratization of the Internet.

*

Surveillance Valley is not interested in making friends.

It is an unsparing look at the origins of, and the current state of, the Internet. And it is a book that has little interest in helping to prop up the popular myths that sustain the utopian image of the Internet. It is a book that should be read by anyone who was outraged by the Facebook/Cambridge Analytica scandal, anyone who feels uncomfortable about Google building drones or Amazon building facial recognition software, and frankly by anyone who uses the Internet. At the very least, after reading Surveillance Valley many of those aforementioned situations seem far less surprising. While there is no shortage of books, many of them quite excellent, that argue that steps need to be taken to create “the Internet we want,” in Surveillance Valley Yasha Levine takes a step back and insists “first we need to really understand what the Internet really is.” And it is not as simple as merely saying “Google is bad.”

While much of the history that Levine unpacks won’t be new to historians of technology, or those well versed in critiques of technology, Surveillance Valley brings many, often separate, strands into one narrative. Too often the early history of computing and the Internet is placed in one silo, while the rise of the tech giants is placed in another – by bringing them together, Levine is able to show the continuities and allow them to be understood more fully. What is particularly noteworthy in Levine’s account is his emphasis on early pushback to ARPANET, an often forgotten series of occurrences that certainly deserves a book of its own. Levine describes students in the 1960s who saw in early ARPANET projects “a networked system of surveillance, political control, and military conquest being quietly assembled by diligent researchers and engineers at college campuses around the country,” and as Levine provocatively adds, “the college kids had a point” (64). Similarly, Levine highlights NBC reporting from 1975 on the CIA and NSA spying on Americans by utilizing ARPANET, and on the efforts of Senators to rein in these projects. Though Levine is not presenting, nor is he claiming to present, a comprehensive history of pushback and resistance, his account makes it clear that liberatory claims regarding technology were often met with skepticism. And much of that skepticism proved to be highly prescient.

Yet this history of resistance has largely been forgotten amidst the clever contortions that shifted the Internet’s origins, in the public imagination, from counterinsurgency in Vietnam to the counterculture in California. Though the area of Surveillance Valley that will likely cause the most contention is Levine’s chapters on crypto-tools like Tor and Signal, perhaps his greatest heresy is in his refusal to pay homage to the early tech-evangels like Stewart Brand and Kevin Kelly. While the likes of Brand, and John Perry Barlow, are often celebrated as visionaries whose utopian blueprints have been warped by power-hungry tech firms, Levine is frank in framing such figures as long-haired libertarians who knew how to spin a compelling story in such a way that made empowering massive corporations seem like a radical act. And this is in keeping with one of the major themes that runs, often subtly, through Surveillance Valley: the substitution of technology for politics. Thus, in his book, Levine not only frames the Internet as disempowering insofar as it runs on surveillance and relies on massive corporations, but also emphasizes how the ideological core of the Internet focuses all political action on technology. To every social, economic, and political problem the Internet presents itself as the solution – but Levine is unwilling to go along with that idea.

Those who were familiar with Levine’s journalism before he penned Surveillance Valley will know that much of his reporting has covered crypto-tech, like Tor, and similar privacy technologies. Indeed, in a certain respect, Surveillance Valley can be read as an outgrowth of that reporting. And it is also important to note, as Levine does in the book, that Levine did not make himself many friends in the crypto community by taking on Tor. It is doubtful that cypherpunks will like Surveillance Valley, but it is just as doubtful that they will bother to actually read it and engage with Levine’s argument or the history he lays out. This is a shame, for it would be a mistake to frame Levine’s book as an attack on Tor (or on those who work on the project). Levine’s comments on Tor are in keeping with the thrust of the larger argument of his book: such privacy tools are high-tech solutions to problems created by high-tech society, and they mainly serve to keep people hooked into all those high-tech systems. And he questions the politics of Tor, noting that “Silicon Valley fears a political solution to privacy. Internet Freedom and crypto offer an acceptable solution” (268). Or, to put it another way, Tor is kind of like shopping at Whole Foods – people who are concerned about their food are willing to pay a bit more to get their food there, but in the end shopping there lets people feel good about what they’re doing without genuinely challenging the broader system. And, of course, now Whole Foods is owned by Amazon. The most important element of Levine’s critique of Tor is not that it doesn’t work (for some, like Snowden, it clearly does) but that most users do not know how to use it properly (and are unwilling to lead a genuinely full-crypto lifestyle), and so it fails to offer more than a false sense of security.

Thus, to say it again, Surveillance Valley isn’t particularly interested in making a lot of friends. With one hand it brushes away the comforting myths about the Internet, and with the other it pushes away the tools that are often touted as the solution to many of the Internet’s problems. And in so doing Levine takes on a variety of technoculture’s sainted figures like Stewart Brand, Edward Snowden, and even organizations like the EFF. While Levine clearly doesn’t seem interested in creating new myths, or propping up new heroes, it seems as though he somewhat misses an opportunity here. Levine shows how some groups and individuals had warned about the Internet back when it was still ARPANET, and a greater emphasis on such people could have helped create a better sense of alternatives and paths that were not taken. Levine notes near the book’s end that, “we live in bleak times, and the Internet is a reflection of them: run by spies and powerful corporations just as our society is run by them. But it isn’t all hopeless” (274). Yet it would be easier to believe the “isn’t all hopeless” sentiment had the book provided more analysis of successful instances of pushback. While it is respectable that Levine puts forward democratic (small d) action as the needed response, this comes as the solution at the end of a lengthy work that has discussed how the Internet has largely eroded democracy. What Levine’s book points to is that it isn’t enough to just talk about democracy; one needs to recognize that some technologies are democratic while others are not. And though we are loath to admit it, perhaps the Internet (and computers) simply are not democratic technologies. Sure, we may be able to use them for democratic purposes, but that does not make the technologies themselves democratic.

Surveillance Valley is a troubling book, but it is an important book. It smashes comforting myths and refuses to leave its readers with simple solutions. What it demonstrates in stark relief is that surveillance and unnerving links to the military-industrial complex are not signs that the Internet has gone awry, but signs that the Internet is functioning as intended.

_____

Zachary Loeb is a writer, activist, librarian, and terrible accordion player. He earned his MSIS from the University of Texas at Austin, an MA from the Media, Culture, and Communications department at NYU, and is currently working towards a PhD in the History and Sociology of Science department at the University of Pennsylvania. His research areas include media refusal and resistance to technology, ideologies that develop in response to technological change, and the ways in which technology factors into ethical philosophy – particularly with regard to the way in which Jewish philosophers have written about ethics and technology. Using the moniker “The Luddbrarian,” Loeb writes at the blog Librarian Shipwreck, and is a frequent contributor to The b2 Review Digital Studies section.
