a review of Charlie Brooker, writer & producer, Black Mirror (BBC/Zeppotron, 2011- )

by Zachary Loeb

~

Depending upon which sections of the newspaper one reads, it is very easy to come away with two rather conflicting views of the future. If one begins the day by reading the headlines in the “International News” or “Environment” sections, it is easy to feel overwhelmed by a sense of anxiety and impending doom; however, if one instead reads the sections devoted to “Business” or “Technology,” it is easy to feel confident that there are brighter days ahead. We are promised that soon we shall live in wondrous “Smart” homes where all of our devices work together tirelessly to ensure our every need is met, while drones deliver our every desire and we enjoy ever more immersive entertainment experiences, with all of this providing plenty of wondrous investment opportunities…unless of course another economic collapse or climate change should spoil these fantasies. Though the juxtaposition between newspaper sections can be jarring, an element of anxiety can generally be detected from one section to the next – even within the “Technology” pages. After all, our devices may have filled our hours with apps and social networking sites, but this does not necessarily mean that they have left us more fulfilled. We have been supplied with all manner of answers, but this does not necessarily mean we had first asked any questions.

[youtube https://www.youtube.com/watch?v=pimqGkBT6Ek&w=560&h=315]

If you could remember everything, would you want to? If a cartoon bear lampooned the pointlessness of elections, would you vote for the bear? Would you participate in psychological torture, if the person being tortured was a criminal? What lengths would you go to if you could not move on from a loved one’s death? These are the types of questions posed by the British television program Black Mirror, wherein anxiety about the technologically riddled future, be it the far future or next week, is the core concern. The paranoid pessimism of this science-fiction anthology program is not a result of a fear of the other or of panic at the prospect of nuclear annihilation – but is instead shaped by nervousness at the way we have become strangers to ourselves. There are no alien invaders or occult phenomena, nor is there a suit-wearing narrator who makes sure that the viewers understand the moral of each story. Instead what Black Mirror presents is dread – it holds up a “black mirror” (think of any electronic device when the power on the screen is off) to society and refuses to flinch at the reflection.

Granted, this does not mean that those viewing the program will not flinch.

[And Now A Brief Digression]

Before this analysis goes any further it seems worthwhile to pause and make a few things clear. Firstly, and perhaps most importantly, the intention here is not to pass a definitive judgment on the quality of Black Mirror. While there are certainly arguments that can be made regarding how “this episode was better than that one” – this is not the concern here. Nor for that matter is the goal to scoff derisively at Black Mirror and simply dismiss it – the episodes are well written, interestingly directed, and strongly acted. Indeed, that the program can lead to discussion and introspection is perhaps the highest praise that one can bestow upon a piece of widely disseminated popular culture. Secondly, and perhaps even more importantly (depending on your opinion), some of the episodes of Black Mirror rely upon twists and surprises in order to have their full impact upon the viewer. Oftentimes people find it highly frustrating to have these moments revealed to them ahead of time, and thus – in the name of fairness – let this serve as an official “spoiler warning.” The plots of each episode will not be discussed in minute detail in what follows – as the intent here is to consider broader themes and problems – but if you hate “spoilers” you should consider yourself warned.

[Digression Ends]

The problem posed by Black Mirror is that in building nervous narratives about the technological tomorrow the program winds up replicating many of the shortcomings of contemporary discussions around technology, shortcomings that make such an unpleasant future seem all the more plausible. While Black Mirror may resist the obvious morality plays of a show like The Twilight Zone, the morals of the episodes may be far less oppositional than they at first seem. The program draws much of its emotional heft by narrowly focusing its stories upon specific individuals, but in so doing the show may function as a sort of precognitive “usage manual,” one that advises “if a day should arrive when you can technologically remember everything…don’t be like the guy in this episode.” The episodes of Black Mirror may call upon viewers to look askance at the future they portray, but they also encourage the sort of droll, inured acceptance that is characteristic of the people in each episode of the program. Black Mirror is a sleek, hip piece of entertainment, another installment in the contemporary “golden age of television,” wherein it risks becoming just another program that can be streamed onto any of a person’s black-mirror-like screens. The program is itself very much a part of the same culture industry of the YouTube and Twitter era that the show seems to vilify – it is ready-made for “binge watching.” The program may be disturbing, but its indictments are soft – allowing viewers a distance that permits them to say aloud “I would never do that” even as they are subconsciously unsure.

Thus, Black Mirror appears as a sort of tragic confirmation of the continuing validity of Jacques Ellul’s comment:

“One cannot but marvel at an organization which provides the antidote as it distills the poison.” (Ellul, 378)

For the tales that are spun out in horrifying (or at least discomforting) detail on Black Mirror may appear to be a salve for contemporary society’s technological trajectory – but the show is also a ready-made product for the very age that it is critiquing. It is a salve that does not solve anything, a cultural shock absorber that allows viewers to endure the next wave of shocks. It is a program that demands viewers break away from their attachment to their black mirrors even as it encourages them to watch another episode of Black Mirror. This is not to claim that the show lacks value as a critique; however, the show is less a radical indictment than some may be tempted to give it credit for being. The discomfort people experience while watching the show easily becomes a masochistic penance that allows people to continue walking down the path to the futures outlined in the show. Black Mirror provides the antidote, but it also distills the poison.

That, however, may be the point.

[Interrogation 1: Who Bears Responsibility?]

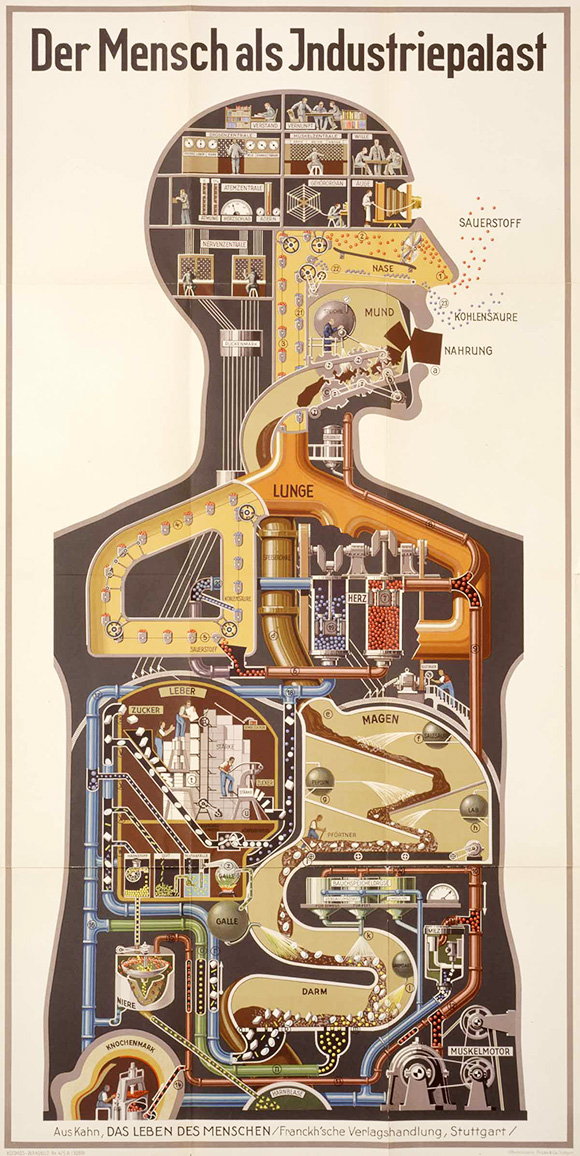

Technology is, of course, everywhere in Black Mirror – in many episodes it is as much of a character as the humans who are trying to come to terms with what the particular device means. In some episodes (“The National Anthem” or “The Waldo Moment”) the technologies that feature prominently are those that would be quite familiar to contemporary viewers: social media platforms like YouTube, Twitter, Facebook and the like. Whilst in other episodes (“The Complete History of You,” “White Bear” and “Be Right Back”) the technologies on display are new and different: an implantable device that records (and can play back) all of one’s memories, something that can induce temporary amnesia, a company that has developed a being that is an impressive mix of robotics and cloning. The stories that are told in Black Mirror, as was mentioned earlier, focus largely on the tales of individuals – “Be Right Back” is primarily about one person’s grief – and though this is a powerful story-telling device (and lest there be any confusion – many of these are very powerfully told stories) one of the questions that lingers unanswered in the background of many of these episodes is: who is behind these technologies?

In fairness, Black Mirror would likely lose some of its impact if it were to delve deeply into this question. If “The Complete History of You” provided a sci-fi faux-documentary foray into the company that had produced the memory-recording “grains” it would probably not have felt as disturbing as the tale of abuse, sex, violence and obsession that the episode actually presents. Similarly, the piece of science-fiction-grade technology upon which “White Bear” relies functions well in the episode precisely because the key device makes only a rather brief appearance. And yet here an interesting contrast emerges between the episodes set in, or closely around, the present and those that are set further down the timeline – for in the episodes that rely on platforms like YouTube, the viewer technically knows who the interests are behind the various platforms. The episode “The Complete History of You” may be intensely disturbing, but what company was it that developed and brought the “grains” to market? What biotechnology firm supplies the grieving spouse in “Be Right Back” with the robotic/clone of her deceased husband? Who gathers the information from these devices? Where does that information live? Who is profiting? These are important questions that go unanswered, largely because they go unasked.

Of course, it can be simple to disregard these questions. Dwelling upon them certainly does take something away from the individual episodes and such focus diminishes the entertainment quality of Black Mirror. This is fundamentally why it is so essential to insist that these critical questions be asked. The worlds depicted in episodes of Black Mirror did not “just happen” but are instead a result of layers upon layers of decisions and choices that have wound up shaping these characters’ lives – and it is questionable how much say any of these characters had in these decisions. This is shown in stark relief in “The National Anthem,” in which a befuddled prime minister cannot come to grips with the way that a threat uploaded to YouTube, along with shifts in public opinion as reflected on Twitter, has come to require him to commit a grotesque act; his despair at what he is being compelled to do is a reflection of the new world of politics created by social media. In some ways it is tempting to treat episodes like “The Complete History of You” and “Be Right Back” as retorts to an unflagging adoration for “innovation,” “disruption,” and “permissionless innovation” – for the episodes can be read as a warning that just because we can record and remember everything does not necessarily mean that we should. And yet the presence of such a cultural warning does not mean that such devices will not eventually be brought to market. The denizens of the worlds of Black Mirror are depicted as being at the mercy of the technological current.

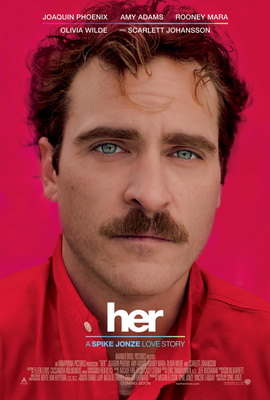

Thus, and here is where the problem truly emerges, the episodes can be treated as simple warnings that state “well, don’t be like this person.” After all, the world of “The Complete History of You” seems to be filled with people who – unlike the obsessive main character – can use the “grain” productively; on a similar note it can be easy to imagine many people pointing to “Be Right Back” and saying that the idea of a robotic/clone could be wonderful – just don’t use it to replicate the recently dead; and of course any criticism of social media in “The Waldo Moment” or “The National Anthem” can be met with a retort regarding a blossoming of free expression and the ways in which such platforms can help bolster new protest movements. And yet, similar to the sad protagonist in the film Her, the characters in the story lines of Black Mirror rarely appear as active agents in relation to technology even when they are depicted as truly “choosing” a given device. Rather they have simply been reduced to consumers – whether they are consumers of social media, political campaigns, or an amusement park where the “show” is a person being psychologically tortured day after day.

This is not to claim that there should be an Apple or Google logo prominently displayed on the “grain” or on the side of the stationary bikes in “Fifteen Million Merits,” nor is it to argue that the people behind these devices should be depicted as cackling corporate monsters – but it would be helpful to have at least some image of the people behind these devices. After all, there are people behind these devices. What were they thinking? Were they not aware of these potential risks? Did they not care? Who bears responsibility? In focusing on the small-scale human stories Black Mirror ignores the fact that there is another all too human story behind all of these technologies. Thus what the program riskily replicates is a sort of technological determinism that seems to have nestled itself into the way that people talk about technology these days – a sentiment in which people have no choice but to accept (and buy) what technology firms are selling them. It is not so much, to borrow a line from Star Trek, that “resistance is futile” as that nobody seems to have even considered resistance to be an option in the first place. Granted, we have seen in the not too distant past that such a sentiment is simply not true – Google Glass was once presented as inevitable but public push-back helped lead to Google (at least temporarily) shelving the device. Alas, one of the most effective ways of convincing people that they are powerless to resist is by bludgeoning them with cultural products that tell them they are powerless to resist. Or, better yet, by convincing them that they will actually like being “assimilated.”

Therefore, the key thing to mull over after watching an episode of Black Mirror is not what is presented in the episode but what has been left out. Viewers need to ask the questions the show does not present: who is behind these technologies? What decisions have led to the societal acceptance of these technologies? Did anybody offer resistance to these new technologies? The “6 Questions to Ask of New Technology” posed by media theorist Neil Postman may be of use for these purposes, as might some of the questions posed in Riddled With Questions. The emphasis here is to point out that a danger of Black Mirror is that the viewer winds up being just like one of the characters: a person who simply accepts the technologically wrought world in which they are living without questioning those responsible and without thinking that opposition is possible.

[Interrogation 2: Utopia Unhinged is not a Dystopia]

“Dystopia” is a term that has become a fairly prominent feature in popular entertainment today. Bookshelves are filled with tales of doomed futures and many of these titles (particularly those aimed at the “young adult” audience) have a tendency to eventually reach the screens of the cinema. Of course, apocalyptic visions of the future are not limited to the big screen – as numerous television programs attest. For many, it is tempting to use terms such as “dystopia” when discussing the futures portrayed in Black Mirror and yet the usage of such a term seems rather misleading. True, at least one episode (“Fifteen Million Merits”) is clearly meant to evoke a dystopian far future, but to use that term in relation to many of the other installments seems a bit hyperbolic. After all, “The Waldo Moment” could be set tomorrow and frankly “The National Anthem” could have been set yesterday. To say that Black Mirror is a dystopian show risks taking an overly simplistic stance towards technology in the present as well as towards technology in the future – if the claim is that the show is thoroughly dystopian then how does one account for the episodes that may as well be set in the present? One can argue that the state of the present world is far less than ideal, one can cast a withering gaze in the direction of social media, one can truly believe that the current trajectory (if not altered) will lead in a negative direction…and yet one can believe all of these things and still resist the urge to label contemporary society a dystopia. Doomsaying can be an enjoyably nihilistic way to pass an afternoon, but it makes for a rather poor critique.

It may be that what Black Mirror shows is how a dystopia can actually be a private hell instead of a societal one (which would certainly seem true of “White Bear” or “The Complete History of You”), or perhaps what Black Mirror indicates is that a derailed utopia is not automatically a dystopia. Granted, a major criticism of Black Mirror could emphasize that the show has a decidedly “industrialized world/Western world” focus – we do not see the factories where “grains” are manufactured and the varieties of new smart phones seen in the program suggest that the e-waste must be piling up somewhere. In other words – the derailed utopia of some could still be an outright dystopia for countless others. That the characters in Black Mirror do not seem particularly concerned with who assembled their devices is, alas, a feature all too characteristic of technology users today. Nevertheless, to restate the problem, the issue is not so much the threat of dystopia as it is the continued failure of humanity to use its impressive technological ingenuity to bring about a utopia (or even something “better” than the present). In some ways this provides an echo of Lewis Mumford’s comment, in The Story of Utopias, that:

“it would be so easy, this business of making over the world if it were only a matter of creating machinery.” (Mumford, 175)

True, the worlds of Black Mirror, including the ones depicting the world of today, show that “creating machinery” actually is an easy way “of making over the world” – however this does not automatically push things in the utopian direction for which Mumford was pining. Instead what is on display is another installment of the deferred potential of technology.

The term “another” is not used incidentally here, but is specifically meant to point to the fact that it is nothing new for people to see technology as a source for hope…and then to woefully recognize the way in which such hopes have been dashed time and again. Such a sentiment is visible in much of Walter Benjamin’s writing about technology – writing, as he was, after the mechanized destruction of WWI and on the eve of the technologically enhanced barbarity of WWII. In his essay “Eduard Fuchs, Collector and Historian,” Benjamin criticizes a strain in positivist/social democratic thinking that had emphasized that technological developments would automatically usher in a more just world, when in fact such attitudes woefully failed to appreciate the scale of the dangers. This leads Benjamin to note:

“A prognosis was due, but failed to materialize. That failure sealed a process characteristic of the past century: the bungled reception of technology. The process has consisted of a series of energetic, constantly renewed efforts, all attempting to overcome the fact that technology serves this society only by producing commodities.” (Benjamin, 266)

The century about which Benjamin was writing was not the twenty-first century, and yet these comments about “the bungled reception of technology” and technology which “serves this society only by producing commodities” seem a rather accurate description of the worlds depicted by Black Mirror. And yes, that certainly includes the episodes that are closer to our own day. The point of pulling out this tension, however, is to emphasize not the dystopian element of Black Mirror but to point to the “bungled reception” that is so clearly on display in the program – and by extension in the present day.

What Black Mirror shows in episode after episode (even in the clearly dystopian one) is the gloomy juxtaposition between what humanity can possibly achieve and what it actually achieves. The tools that could widen democratic participation can be used to allow a cartoon bear to run as a stunt candidate, the devices that allow us to remember the past can ruin the present by keeping us constantly replaying our memories of yesterday, the things that can allow us to connect can make it so that we are unable to ever let go – “energetic, constantly renewed efforts” that all wind up simply “producing commodities.” Indeed, in a tragicomic turn, Black Mirror demonstrates that amongst the commodities we continue to produce are those that elevate the “bungled reception of technology” to the level of a widely watched and critically lauded television serial.

The future depicted by Black Mirror may be startling, disheartening and quite depressing, but (except in the cases where the content is explicitly dystopian) it is worth bearing in mind that there is an important difference between dystopia and a world of people living amidst the continued “bungled reception of technology.” Are the people in “The National Anthem” paving the way for “White Bear” and in turn setting the stage for “Fifteen Million Merits?” It is quite possible. But this does not mean that the “reception of technology” must always be “bungled” – though changing our reception of it may require altering our attitude towards it. Here Black Mirror repeats its problematic thrust, for it does not highlight resistance but emphasizes the very attitudes that have “bungled” the reception and which continue to bungle the reception. Though “Fifteen Million Merits” does feature a character engaging in a brave act of rebellion, this act is immediately used to strengthen the very forces against which the character is rebelling – and thus the episode repeats the refrain “don’t bother resisting, it’s too late anyways.” This is not to suggest that one should focus all one’s hopes upon a farfetched utopian notion, or put faith in a sense of “hope” that is not linked to reality, nor does it mean that one should don sackcloth and begin mourning. Dystopias are cheap these days, but so are the fake utopian dreams that promise a world in which somehow technology will solve all of our problems. And yet, it is worth bearing in mind another comment from Mumford regarding the possibility of utopia:

“we cannot ignore our utopias. They exist in the same way that north and south exist; if we are not familiar with their classical statements we at least know them as they spring to life each day in our minds. We can never reach the points of the compass; and so no doubt we shall never live in utopia; but without the magnetic needle we should not be able to travel intelligently at all.” (Mumford, 28/29)

Black Mirror provides a stark portrait of the fake utopian lure that can lead us to the world to which we do not want to go – a world in which the “bungled reception of technology” continues to rule – but in staring horror struck at where we do not want to go we should not forget to ask where it is that we do want to go. The worlds of Black Mirror are steps in the wrong direction – so ask yourself: what would the steps in the right direction look like?

[Final Interrogation – Permission to Panic]

During “The Complete History of You” several characters enjoy a dinner party in which the topic of discussion eventually turns to the benefits and drawbacks of the memory-recording “grains.” Many attitudes towards the “grains” are voiced – ranging from individuals who cannot imagine doing without the “grain” to a woman who has had hers violently removed and who has managed to adjust. While “The Complete History of You” focuses on an obsessed individual who cannot cope with a world in which everything can be remembered, what the dinner party demonstrates is that the same world contains many people who can handle the “grains” just fine. The failed comedian who voices the cartoon bear in “The Waldo Moment” cannot understand why people are drawn to vote for the character he voices – but this does not stop many people from voting for the animated animal. Perhaps most disturbingly, the woman at the center of “White Bear” cannot understand why she is followed by crowds filming her on their smart phones while she is hunted by masked assailants – but this does not stop those filming her from playing an active role in her torture. And so on…and so on…Black Mirror shows that in these horrific worlds, there are many people who are quite content with the new status quo. But that not everybody is despairing simply attests to Theodor Adorno and Max Horkheimer’s observation that:

“A happy life in a world of horror is ignominiously refuted by the mere existence of that world. The latter therefore becomes the essence, the former negligible.” (Adorno and Horkheimer, 93)

Black Mirror is a complex program, made all the more difficult to consider as the anthology character of the show makes each episode quite different in terms of the issues that it dwells upon. The attitudes towards technology and society that are subtly suggested in the various episodes are in line with the despairing aura that surrounds the various protagonists and antagonists of the episodes. Yet, insofar as Black Mirror advances an ethos it is one of inured acceptance – it is a satire that is both tragedy and comedy. The first episode of the program, “The National Anthem,” is an indictment of a society that cannot tear itself away from the horrors being depicted on screens, delivered in a television show that owes its success to keeping people transfixed by horrors being depicted on their screens. The show holds up a “black mirror” to society but what it shows is a world in which the tables are rigged and the audience has already lost – it is a magnificently troubling cultural product that attests to the way the culture industry can (to return to Ellul) provide the antidote even as it distills the poison. Or, to quote Adorno and Horkheimer again (swap out the word “filmgoers” with “TV viewers”):

“The permanently hopeless situations which grind down filmgoers in daily life are transformed by their reproduction, in some unknown way, into a promise that they may continue to exist. One needs only to become aware of one’s nullity, to subscribe to one’s own defeat, and one is already a party to it. Society is made up of the desperate and thus falls prey to rackets.” (Adorno and Horkheimer, 123)

This is the danger of Black Mirror: that it may accustom and inure its viewers to the ugly present it displays while preparing them to fall prey to the “bungled reception” of tomorrow – it inculcates the ethos of “one’s own defeat.” By showing worlds in which people are helpless to do anything much to challenge the technological society in which they have become cogs, Black Mirror risks perpetuating the sense that the viewers are themselves cogs, that the viewers are themselves helpless. There is an uncomfortable kinship between the TV-viewing characters of “The National Anthem” and the real world viewer of the episode “The National Anthem” – neither party can look away. Or, to put it more starkly: if you are unable to alter the future why not simply prepare yourself for it by watching more episodes of Black Mirror? At least that way you will know which characters not to imitate.

And yet, despite these critiques, it would be unwise to fully disregard the program. It is easy to pull out comments from the likes of Ellul, Adorno, Horkheimer and Mumford that eviscerate a program such as Black Mirror, but it may be more important to ask: given Black Mirror’s shortcomings, what value can the show still have? Here it is useful to recall a comment from Günther Anders (whose pessimism was on par with, or exceeded, that of any of the aforementioned thinkers) – he was referring in this comment to the works of Kafka, but the comment is still useful:

“from great warnings we should be able to learn, and they should help us to teach others.” (Anders, 98)

This is where Black Mirror can be useful, not as a series that people sit and watch, but as a piece of culture that leads people to put forth the questions that the show jumps over. At its best what Black Mirror provides is a space in which people can discuss their fears and anxieties about technology without worrying that somebody will, farcically, call them a “Luddite” for daring to have such concerns – and for this reason alone the show may be worthwhile. By highlighting the questions that go unanswered in Black Mirror we may be able to put forth the very queries that are rarely made about technology today. It is true that the reflections seen by staring into Black Mirror are dark, warped and unappealing – but such reflections are only worth something if they compel audiences to rethink their relationships to the black mirrored surfaces that are in their lives today and that may be in their lives tomorrow. After all, one can look into the mirror in order to see the dirt on one’s face or one can look in the mirror because of a narcissistic urge. The program certainly has the potential to provide a useful reflection, but as with the technology depicted in the show, it is all too easy for such a potential reception to be “bungled.”

If we are spending too much time gazing at black mirrors, is the solution really to stare at Black Mirror?

The show may be a satire, but if all people do is watch, then the joke is on the audience.

_____

Zachary Loeb is a writer, activist, librarian, and terrible accordion player. He earned his MSIS from the University of Texas at Austin, and is currently working towards an MA in the Media, Culture, and Communications department at NYU. His research areas include media refusal and resistance to technology, ethical implications of technology, infrastructure and e-waste, as well as the intersection of library science with the STS field. Using the moniker “The Luddbrarian,” Loeb writes at the blog Librarian Shipwreck. He is a frequent contributor to The b2 Review Digital Studies section.

_____

Works Cited

- Adorno, Theodor and Horkheimer, Max. Dialectic of Enlightenment: Philosophical Fragments. Stanford: Stanford University Press, 2002.

- Anders, Günther. Franz Kafka. New York: Hilary House Publishers LTD, 1960.

- Benjamin, Walter. Walter Benjamin: Selected Writings. Volume 3, 1935-1938. Cambridge: The Belknap Press, 2002.

- Ellul, Jacques. The Technological Society. New York: Vintage Books, 1964.

- Mumford, Lewis. The Story of Utopias. Bibliobazaar, 2008.