a review of Timothy Wu, The Curse of Bigness: Antitrust in the New Gilded Age (Random House/Columbia Global Reports, 2018)

by Richard Hill

~

Tim Wu’s brilliant new book analyses in detail one specific aspect and cause of the dominance of big companies in general and big tech companies in particular: the current unwillingness to modernize antitrust law to deal with concentration in the provision of key Internet services. Wu is a professor at Columbia Law School, and a contributing opinion writer for the New York Times. He is best known for his work on Net Neutrality theory. He is author of the books The Master Switch and The Attention Merchants, along with Network Neutrality, Broadband Discrimination, and other works. In 2013 he was named one of America’s 100 Most Influential Lawyers, and in 2017 he was named to the American Academy of Arts and Sciences.

What are the consequences of allowing unrestricted growth of concentrated private power, and abandoning most curbs on anticompetitive conduct? As Wu masterfully reminds us:

We have managed to recreate both the economics and politics of a century ago – the first Gilded Age – and remain in grave danger of repeating more of the signature errors of the twentieth century. As that era has taught us, extreme economic concentration yields gross inequality and material suffering, feeding an appetite for nationalistic and extremist leadership. Yet, as if blind to the greatest lessons of the last century, we are going down the same path. If we learned one thing from the Gilded Age, it should have been this: The road to fascism and dictatorship is paved with failures of economic policy to serve the needs of the general public. (14)

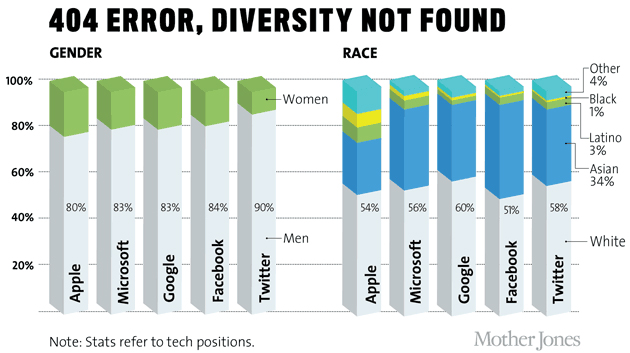

While increasing concentration, and its negative effects on social equity, is a general phenomenon, it is particularly concerning with regard to the Internet: “Most visible in our daily lives is the great power of the tech platforms, especially Google, Facebook, and Amazon, who have gained extraordinary power over our lives. With this centralization of private power has come a renewed concentration of wealth, and a wide gap between the rich and poor” (15). These trends have very real political effects: “The concentration of wealth and power has helped transform and radicalize electoral politics. As in the Gilded Age, a disaffected and declining middle class has come to support radically anti-corporate and nationalist candidates, catering to a discontent that transcends party lines” (15). “What we must realize is that, once again, we face what Louis Brandeis called the ‘Curse of Bigness,’ which, as he warned, represents a profound threat to democracy itself. What else can one say about a time when we simply accept that industry will have far greater influence over elections and lawmaking than mere citizens?” (15). And, I would add, what have we come to when some advocate that corporations should have veto power over public policies that affect all of us?

Surely it is, or should be, obvious that current extreme levels of concentration are not compatible with the premises of social and economic equity, free competition, or democracy, and that “the classic antidote to bigness – the antitrust and other antimonopoly laws – might be recovered and updated to face the challenges of our times” (16). Those who doubt these propositions should read Wu’s book carefully, because he shows that they are true. My only suggestion for improvement would be to add a more detailed explanation of how network effects interact with economies of scale to favour concentration in the ICT industry in general, and in telecommunications and the Internet in particular. But this topic is well explained in other works.

As Wu points out, antitrust law must not be restricted (as it is at present in the USA) “to deal with one very narrow type of harm: higher prices to consumers” (17). On the contrary, “It needs better tools to assess new forms of market power, to assess macroeconomic arguments, and to take seriously the link between industrial concentration and political influence” (18). The same has been said by other scholars, by a newspaper, an advocacy group, a commission of the European Parliament, a group of European industries, a well-known academic, and even by a plutocrat who benefitted from the current regime.

Do we have a choice? Can we continue to pretend that we don’t need to adapt antitrust law to rein in the excessive power of the Internet giants? No: “The alternative is not appealing. Over the twentieth century, nations that failed to control private power and attend to the economic needs of their citizens faced the rise of strongmen who promised their citizens a more immediate deliverance from economic woes” (18). (I would argue that any resemblance to the election of US President Trump, to the British vote to leave the European Union, and to the rise of so-called populist parties in several European countries [e.g. Hungary, Italy, Poland, Sweden] is not coincidental.)

Chapter One of Wu’s book, “The Monopolization Movement,” provides historical background, reminding us that from the late nineteenth through the early twentieth century, dominant, sector-specific monopolies emerged and were thought to be an appropriate way to structure economic activity. In the USA, in the early decades of the twentieth century, under the Trust Movement, essentially every area of major industrial activity was controlled or influenced by a single man (but not the same man for each area), e.g. Rockefeller and Morgan. “In the same way that Silicon Valley’s Peter Thiel today argues that monopoly ‘drives progress’ and that ‘competition is for losers,’ adherents to the Trust Movement thought Adam Smith’s fierce competition had no place in a modern, industrialized economy” (26). This system rapidly proved to be dysfunctional: “There was a new divide between the giant corporation and its workers, leading to strikes, violence, and a constant threat of class warfare” (30). Popular resistance mobilized in both Europe and the USA, and it led to the adoption of the first antitrust laws.

Chapter Two, “The Right to Live, and Not Merely to Exist,” reminds us that US Supreme Court Justice Louis Brandeis “really cared about … the economic conditions under which life is lived, and the effects of the economy on one’s character and on the nation’s soul” (33). The chapter outlines Brandeis’ career and what motivated him to combat monopolies.

In Chapter Three, “The Trustbuster,” Wu explains how the 1901 assassination of US President McKinley, a devout supporter of unrestricted laissez-faire capitalism (“let well enough alone”, reminiscent of today’s calls for government to “do no harm” through regulation, and “don’t fix it if it isn’t broken”), resulted in a fundamental change in US economic policy, when Theodore Roosevelt succeeded him. Roosevelt’s “determination that the public was ruler over the corporation, and not vice versa, would make him the single most important advocate of a political antitrust law” (47). He took on the great US monopolists of the time by enforcing the antitrust laws. “To Roosevelt, economic policy did not form an exception to popular rule, and he viewed the seizure of economic policy by Wall Street and trust management as a serious corruption of the democratic system. He also understood, as we should today, that ignoring economic misery and refusing to give the public what they wanted would drive a demand for more extreme solutions, like Marxist or anarchist revolution” (49). Subsequent US presidents and authorities continued to be “trust busters” through the 1990s. At the time, it was understood that antitrust was not just an economic issue, but also a political issue: “power that controls the economy should be in the hands of elected representatives of the people, not in the hands of an industrial oligarchy” (54, citing Justice William Douglas). As we all know, “Increased industrial concentration predictably yields increased influence over political outcomes for corporations and business interests, as opposed to citizens or the public” (55). Wu goes on to explain why and how concentration exacerbates the influence of private companies on public policies and undermines democracy (that is, the rule of the people, by the people, for the people). And he outlines why and how Standard Oil was broken up (as opposed to becoming a government-regulated monopoly).

The chapter then explains why very large companies might experience diseconomies of scale, that is, reduced efficiency. So very large companies compensate for their inefficiency by developing and exploiting “a different kind of advantages having less to do with efficiencies of operation, and more to do with its ability to wield economic and political power, by itself or conjunction with others. In other words, a firm may not actually become more efficient as it gets larger, but may become better at raising prices or keeping out competitors” (71). Wu explains how this is done in practice. The rest of this chapter summarizes the impact of the US presidential election of 1912 on US antitrust actions.

Chapter Four, “Peak Antitrust and the Chicago School,” explains how, during the decades after World War II, strong antitrust laws were viewed as an essential component of democracy; and how the European Community (which later became the European Union) adopted antitrust laws modelled on those of the USA. However, in the mid-1960s, scholars at the University of Chicago (in particular Robert Bork) developed the theory that antitrust measures were meant only to protect consumer welfare, and thus no antitrust actions could be taken unless there was evidence that consumers were being harmed, that is, that a dominant company was raising prices. Harm to competitors or suppliers was no longer sufficient for antitrust enforcement. As Wu shows, this “was really laissez-faire reincarnated.”

Chapter Five, “The Last of the Big Cases,” discusses two of the last really large US antitrust cases. The first was the breakup of the regulated de facto telephone monopoly, AT&T, which was initiated in 1974. The second was the case against Microsoft, which started in 1998 and ended in 2001 with a settlement that many consider to be a negative turning point in US antitrust enforcement. (A third big case, the 1969-1982 case against IBM, is discussed in Chapter Six.)

Chapter Six, “Chicago Triumphant,” documents how the US Supreme Court adopted Bork’s “consumer welfare” theory of antitrust, leading to weak enforcement. As a consequence, “In the United States, there have been no trustbusting or ‘big cases’ for nearly twenty years: no cases targeting an industry-spanning monopolist or super-monopolist, seeking the goal of breakup” (110). Thus, “In a run that lasted some two decades, American industry reached levels of industry concentration arguably unseen since the original Trust era. A full 75 percent of industries witnessed increased concentration from the years 1997 to 2012” (115). Wu gives concrete examples: the old AT&T monopoly, which had been broken up, has reconstituted itself; there are only three large US airlines; there are three regional monopolies for cable TV; etc. But the greatest failure “was surely that which allowed the almost entirely uninhibited consolidation of the tech industry into a new class of monopolists” (118).

Chapter Seven, “The Rise of the Tech Trusts,” explains how the Internet morphed from a very competitive environment into one dominated by large companies that buy up any threatening competitor. “When a dominant firm buys a nascent challenger, alarm bells are supposed to ring. Yet both American and European regulators found themselves unable to find anything wrong with the takeover [of Instagram by Facebook]” (122).

The Conclusion, “A Neo-Brandeisian Agenda,” outlines Wu’s thoughts on how to address current issues regarding dominant market power. These include renewing the well-known practice of reviewing mergers; opening up the merger review process to public comment; renewing the practice of bringing major antitrust actions against the biggest companies; breaking up the biggest monopolies; adopting the market investigation law and practices of the United Kingdom; and recognizing that the goal of antitrust is not just to protect consumers against high prices, but also to protect competition per se, that is, to protect competitors, suppliers, and democracy itself. “By providing checks on monopoly and limiting private concentration of economic power, the antitrust law can maintain and support a different economic structure than the one we have now. It can give humans a fighting chance against corporations, and free the political process from invisible government. But to turn the ship, as the leaders of the Progressive era did, will require an acute sensitivity to the dangers of the current path, the growing threats to the Constitutional order, and the potential of rebuilding a nation that actually lives up to its greatest ideals” (139).

In other words, something is rotten in the state of the Internet: it has “

Wu’s call for action is not just opportune, but necessary and important; at the same time, it is not sufficient.

_____

Richard Hill is President of the Association for Proper Internet Governance, and was formerly a senior official at the International Telecommunication Union (ITU). He has been involved in internet governance issues since the inception of the internet and is now an activist in that area, speaking, publishing, and contributing to discussions in various forums. Among other works he is the author of The New International Telecommunication Regulations and the Internet: A Commentary and Legislative History (Springer, 2014). He writes frequently about internet governance issues for The b2o Review Digital Studies magazine.