Moira Weigel

This essay has been peer-reviewed by “The New Extremism” special issue editors (Adrienne Massanari and David Golumbia), and the b2o: An Online Journal editorial board.

Since the election of Donald Trump, a growing body of research has examined the role of digital technologies in new right wing movements (Lewis 2018; Hawley 2017; Neiwert 2017; Nagle 2017). This article will explore a distinct, but related, subject: new right wing tendencies within the tech industry itself. Our point of entry will be an improbable document: a German language dissertation submitted by an American to the faculty of social sciences at J. W. Goethe University of Frankfurt in 2002. Entitled Aggression in the Life-World, the dissertation aims to describe the role that aggression plays in social integration, or the set of processes that lead individuals in a given society to feel bound to one another. To that end, it offers a “systematic” reinterpretation of Theodor Adorno’s Jargon of Authenticity (1973). It is of interest primarily because of its author: Alexander C. Karp.[1]

Karp, as some readers may know, did not pursue a career in academia. Instead, he became the CEO of the powerful and secretive data analytics company, Palantir Technologies. His dissertation has inspired speculation for years, but no journalist or scholar has yet analyzed it. Doing so, I will argue that it offers insight into the intellectual formation of an influential network of actors in and around Silicon Valley, a network articulating ideas and developing business practices that challenge longstanding beliefs about how Silicon Valley thinks and works.

For decades, a view prevailed that the politics of both digital technologies and most digital technologists were liberal, or neoliberal, depending on how critically the author in question saw them. Liberalism and neoliberalism are complex and contested concepts. But broadly speaking, digital networks have been seen as embodying liberal or neoliberal logics insofar as they treated individuals as abstractly equal, rendering social aspects of embodiment like race and gender irrelevant, and allowing users to engage directly in free expression and free market competition (Kolko and Nakamura, 2000; Chun 2005, 2011, 2016). The ascendance of the Bay Area tech industry over competitors in Boston or in Europe was explained as a result of its early adoption of new forms of industrial organization, built on flexible, short-term contracts and a strong emotional identification between workers and their jobs (Hayes 1989; Saxenian 1994).

Technologists themselves were said to embrace a new set of values that the British media theorists Richard Barbrook and Andy Cameron dubbed the “Californian Ideology.” This “anti-statist gospel of cybernetic libertarianism… promiscuously combine[d] the free-wheeling spirit of the hippies and the entrepreneurial zeal of the yuppies,” they wrote; it answered the challenge posed by the social liberalism of the New Left by “resurrecting economic liberalism” (1996, 42 & 47). Fred Turner attributed this synthesis to the “New Communalists,” members of the counterculture who “turn[ed] away from questions of gender, race, and class, and toward a rhetoric of individual and small group empowerment” (2006, 97). Nonetheless, he reinforced the broad outlines that Barbrook and Cameron had sketched. Turner further showed that midcentury critiques of mass media, and their alleged tendency to produce authoritarian subjects, inspired faith that digital media could offer salutary alternatives—that “democratic surrounds” would sustain democracy by facilitating the self-formation of democratic subjects (2013).

Silicon Valley has long supported Democratic Party candidates in national politics and many tech CEOs still subscribe to the “hybrid” values of the Californian Ideology (Brookman et al. 2019). However, in recent years, tensions and contradictions within Silicon Valley liberalism, particularly between commitments to social and economic liberalism, have become more pronounced. In the wake of the 2016 presidential election, several software engineers emerged as prominent figures on the “alt-right,” and newly visible white nationalist media entrepreneurs reported that they were drawing large audiences from within the tech industry.[2] The leaking of information from internal meetings at Google to digital outlets like Breitbart and Vox Popoli suggests that there was at least some truth to their claims (Tiku 2018). Individual engineers from Google, YouTube, and Facebook have received national media attention after publicly criticizing the liberal culture of their (former) workplaces and in some cases filing lawsuits against them.[3] And Republican politicians, including Trump (2019a, 2019b), have cited these figures as evidence of “liberal bias” at tech firms and the need for stronger government regulation (Trump 2019a; Kantrowitz 2019).

Karp’s Palantir cofounder (and erstwhile roommate) Peter Thiel looms large in an emerging constellation of technologists, investors, and politicians challenging what they describe as hegemonic social liberalism in Silicon Valley. Thiel has been assembling a network of influential “contrarians” since he founded the Stanford Review as an undergraduate in the late 1980s (Granato 2017). In 2016, Thiel became a highly visible supporter of Donald Trump, speaking at the Republican National Convention, donating $1.25 million in the final weeks of Trump’s campaign for president (Streitfeld 2016a), and serving as his “tech liaison” during the transition period (Streitfeld 2016b). (Earlier in the campaign, Thiel had donated $1 million to the Defeat Crooked Hillary Super PAC backed by Robert Mercer, and overseen by Steve Bannon and Kellyanne Conway; see Green 2017, 200.) Since 2016, he has met with prominent figures associated with the alt-right and “neoreaction”[4] and donated at least $250,000 to support Trump’s reelection in 2020 (Federal Election Commission 2018). He has also given to Trump allies including Missouri Senator Josh Hawley, who has repeatedly attacked Google and Facebook and sponsored multiple bills to regulate tech platforms, citing the threat that they pose to conservative speech.[5]

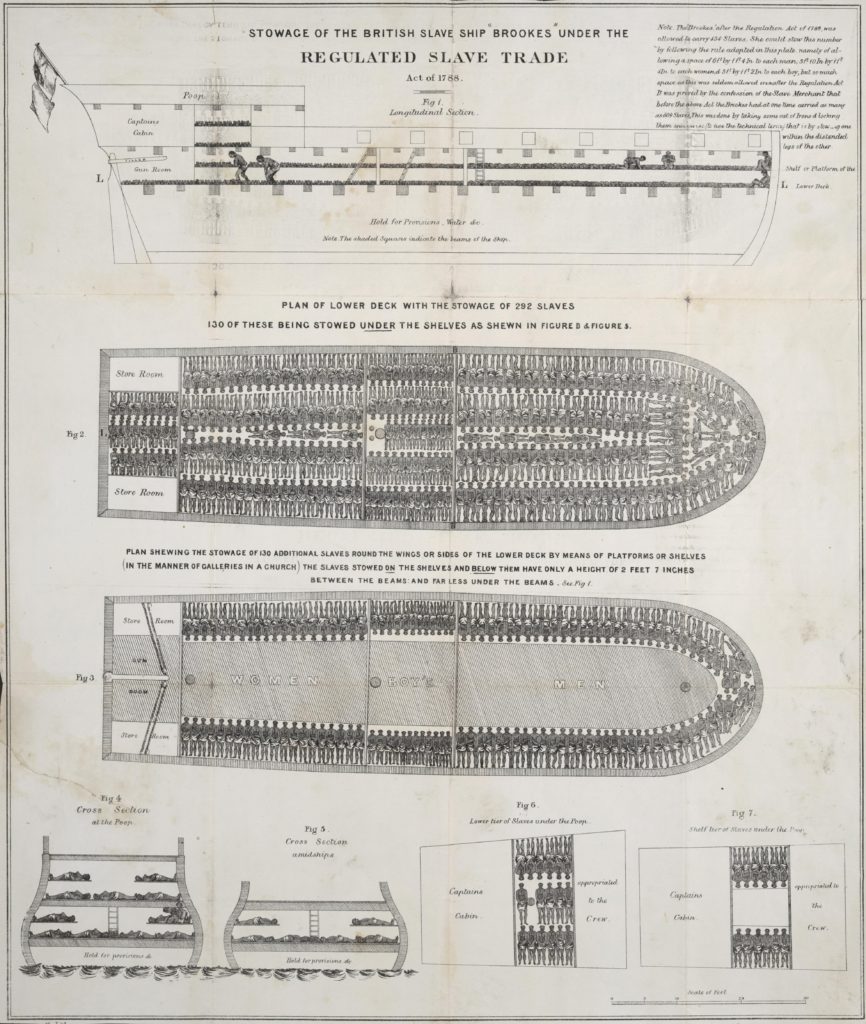

Thiel’s affinity with Trumpism is not merely personal or cultural; it aligns with Palantir’s business interests. According to a 2019 report by Mijente, since Trump came into office in 2017, Palantir contracts with the United States government have increased by over a billion dollars per year. These include multiyear contracts with the US military (Judson 2019; Hatmaker 2019) and with Immigration and Customs Enforcement (ICE) (MacMillan and Dwoskin 2019); Palantir has also worked with police departments in New York, New Orleans, and Los Angeles (Alden 2017; Winston 2018; Harris 2018).

Karp and Thiel have both described these controversial contracts using the language of “nation” and “civilization.” Confronted by critical journalistic coverage (Woodman 2017, Winston 2018, Ahmed 2018) and protests (Burr 2017, Wiener 2017), as well as internal actions by concerned employees (MacMillan and Dwoskin, 2019), Thiel and Karp have doubled down, characterizing the company as “patriotic,” in contrast to its competitors. In an interview conducted at Davos in January 2019, Karp said that Silicon Valley companies that refuse to work with the US government are “borderline craven” (2019b). In a speech at the National Conservatism Conference in July 2019, Thiel called Google “seemingly treasonous” for doing business with China, suggested that the company had been infiltrated by Chinese agents, and called for a government investigation (Thiel 2019a). Soon after, he published an op-ed in the New York Times that restated this case (Thiel 2019b).

However, Karp has cultivated a very different public image from Thiel’s, supporting Hillary Clinton in 2016, saying that he would vote for any Democratic presidential candidate against Trump in 2020 (Chafkin 2019), and—most surprisingly—identifying himself as a Marxist or “neo-Marxist” (Waldman et al. 2018, Mac 2017, Greenberg 2013). He also refers to himself as a “socialist” (Chafkin 2019) and according to at least one journalist, regularly addresses his employees on Marxian thought (Greenberg 2013). On one level, Karp’s dissertation clarifies what he means by this: For a time, he engaged deeply with the work of several neo-Marxist thinkers affiliated with the Institute for Social Research in Frankfurt. On another level, however, Karp’s dissertation invites further perplexity, because right wing movements, including Trump’s, evince special antipathy for precisely that tradition.

Starting in the early 1990s, right-wing think tanks in both Germany and the United States began promoting conspiratorial narratives about critical theory. The conspiracies allege that, ever since the failure of “economic Marxism” in World War I, “neo-” or “cultural Marxists” have infiltrated academia, media, and government. From inside, they have carried out a longstanding plan to overthrow Western civilization by criticizing Western culture and imposing “political correctness.” To the extent that it attaches to real historical figures, the story typically begins with Antonio Gramsci and György Lukács, goes through Max Horkheimer, Theodor Adorno, and other wartime émigrés to the United States, particularly those involved in state-sponsored mass media research, and ends abruptly with Herbert Marcuse and his influence on student movements of the 1960s (Moyn 2018; Huyssen 2017; Jay 2011; Berkowitz 2003).

The term “Cultural Marxism” directly echoes the Nazi theory of “Cultural Bolshevism”; the early proponents of the Cultural Marxism conspiracy theory were more or less overt antisemites and white nationalists (Berkowitz 2003). However, in the 2000s and 2010s, right wing politicians and media personalities helped popularize it well beyond that sphere.[6] Over the same period, it has gained traction in Silicon Valley, too. In recent years, several employees at prominent tech firms have publicly decried the influence of Cultural Marxists, while making complaints about “political correctness” or lack of “viewpoint diversity.”[7]

Thiel has long expressed similar frustrations.[8] So how is it that this prominent opponent of “cultural Marxism” works with a self-described neo-Marxist CEO? Aggression in the Life-World casts light on the core beliefs that animate their partnership. The idiosyncratic adaptation of Western Marxism that it advances does not in fact place Karp at odds with the nationalist projects that Thiel has advocated, and Palantir helps enact. On the contrary, by attempting to render critical theoretical concepts “systematic,” Karp reinterprets them in a way that legitimates the work he would go on to do. Shortly before Palantir began developing its infrastructure for identification and authentication, Aggression in the Life-World articulated an ideology of these processes.

Freud Returns to Frankfurt

Tech industry legend has it that Karp wrote his dissertation under Jürgen Habermas (Silicon Review 2018; Metcalf 2016; Greenberg 2013). In fact, he earned his doctorate from a different part of Goethe University than the one in which Habermas taught: not at the Institute for Social Research but in the Division of Social Sciences. Karp’s primary reader was the social psychologist Karola Brede, who then held a joint appointment at Goethe University’s Sociology Department and at the Sigmund Freud Institute; she and her younger colleague Hans-Joachim Busch appear listed as supervisors on the front page. The confusion is significant, and not only because it suggests an exaggeration. It also obscures important differences of emphasis and orientation between Karp’s advisors and Habermas. These differences directly shaped Karp’s graduate work.

Habermas did engage with psychoanalysis early in his career. In the spring and summer of 1959, he attended every one of a series of lectures organized by the Institute for Social Research to mark the centenary of Freud’s birth (Müller-Doohm 2016, 79; Brede and Mitscherlich-Nielsen 1996, 391). He went on to become close friends and even occasionally co-teach (Brede and Mitscherlich-Nielsen 1996, 395) with one of the organizers and speakers of this series, Alexander Mitscherlich, who had long campaigned with Frankfurt School founder Max Horkheimer for the funds to establish the Sigmund Freud Institute and became the first director when it opened the following year. In 1968, shortly after Mitscherlich and his wife, Margarete, published their influential book, The Inability to Mourn, Habermas developed his first systemic critical social theory in Knowledge and Human Interests (1972). Nearly one third of that book is devoted to psychoanalysis, which Habermas treats as exemplary of knowledge constituted by the “critical” or “emancipatory interest”—that is, the species interest in engaging in critical reflection in order to overcome domination. However, in the 1970s, Habermas turned away from that book’s focus on philosophical anthropology toward the ideas about linguistic competence that culminated in his Theory of Communicative Action; in 1994, Margarete Mitscherlich recounted that Habermas had “gotten over” psychoanalysis in the process of writing that book (1996, 399). Karp’s interest in the theory of the drives, and in aggression in particular, was not drawn from Habermas but from scholars at the Freud Institute, where it was a major focus of research and public debate for decades.

Freud himself never definitively decided whether he believed that a death drive existed. The historian Dagmar Herzog has shown that the question of aggression—and particularly the question of whether human beings are innately driven to commit destructive acts—dominated discussions of psychoanalysis in West Germany in the 1960s and 1970s. “In no other national context would the attempt to make sense of aggression become such a core preoccupation,” Herzog writes (2016, 124). After fascism, this subject was highly politicized. For some, the claim that aggression was a primary drive helped to explain the Nazi past: if all humans had an innate drive to commit violence, Nazi crimes could be understood as an extreme example of a general rule. For others, this interpretation risked naturalizing and normalizing Nazi atrocities. “Sex-radicals” inspired by the work of Wilhelm Reich pointed out that Freud had cited the libido as the explanation for most phenomena in life. According to this camp, Nazi aggression had been the result not of human nature but of repressive authoritarian socialization. In his own work, Mitscherlich attempted to elaborate a series of compromises between the conservative position (that hierarchy and aggression were natural) and the radical one (that new norms of anti-authoritarian socialization could eliminate hierarchy entirely; Herzog 2016, 128-131). Klaus Horn, the long-time director of the division of social psychology at the Freud Institute, whose collected writings Karp’s supervisor Hans-Joachim Busch edited, contested the terms of the disagreement. The entire point of sophisticated psychoanalysis, Horn argued, was that culture and biology were mutually constitutive and interacted continuously; to name one or the other as the source of human behavior was nonsensical (Herzog 2016, 135).

Karp’s primary advisor, Karola Brede, who joined the Sigmund Freud Institute in 1967, began her career in the midst of these debates (Bareuther et al. 1989, 713). In her first book, published in 1972, Brede argued that “psychosomatic” disturbances had to be understood in the context of socialization processes. Not only did neurotic conflicts play a role in somatic illness; such illness constituted “socio-pathological” expressions of an increase in the forms of repression required to integrate individuals into society (Brede 1972). In 1976, Brede published a critique of Konrad Lorenz, whose bestselling work, On Aggression, had triggered much of the initial debate with Alexander Mitscherlich and others at the Institute, in the journal Psyche (“Der Trieb als humanspezifische Kategorie”; see Herzog 2016, 125-7). Since the 1980s, her monographs have focused on work and workplace sociology, and on the role that psychoanalysis should play in critical social theory. Individual and Work (1986) explored the “psychoanalytic costs involved in developing one’s own labor power.” The Adventures of Adjusting to Everyday Work (1995) drew on empirical studies of German workplaces to demonstrate that psychodynamic processes played a key role in professional life, shaping processes of identity formation, authoritarian behavior, and gendered self-identity in the workplace. In that book, Brede criticizes Habermas for undervaluing psychoanalytic concepts—and unconscious aggression in particular—as social forces. Brede argues that the importance that Habermas assigned to “intention” in Theory of Communicative Action prevented him from recognizing the central role that the unconscious played in constituting identity, action, and subjectivity (1995, 223 & 225). 
At the same time, she edited multiple volumes on psychoanalytic theory, including feminist perspectives in psychoanalysis, and, in a series of journal articles in the 1990s, developed a focus on antisemitism and Germany’s relationship to its troubled history (Brede 1995, 1997, 2000).

During his time as a PhD student, Karp seems to have worked very closely with Brede. The sole academic journal article that he published he co-authored with her in 1997. (An analysis of Daniel Goldhagen’s bestselling 1996 study, Hitler’s Willing Executioners, the article attempted to build on Goldhagen’s thesis by characterizing a specific, “eliminationist” form of antisemitism that Karp and Brede argued could only be understood from the perspective of Freudian psychoanalytic theory; see Brede and Karp 1997, 621-6.) Karp wrote the introduction for a volume of the Proceedings of the Freud Institute, which Brede edited (Brede et al. 1999, 5-7). The chapter that Karp contributed to that volume would appear in his dissertation, three years later, in almost identical form. Karp’s dissertation itself also closely followed the themes of Brede’s research.

Aggression in the Life-World

The full title of Karp’s dissertation captures its patchwork quality: Aggression in the Life-World: Expanding Parsons’ Concept of Aggression Through a Description of the Connection Between Jargon, Aggression, and Culture. “This work began,” the opening sentences recall, “with the observation that many statements have the effect of relieving unconscious drives, not in spite, but because, of the fact that they are blatantly irrational” (Karp 2002, 2). Karp proposes that such statements provide relief by allowing a speaker to have things both ways: to acknowledge the existence of a social order and, indeed, demonstrate specific knowledge of that order while, at the same time, expressing taboo wishes that contravene social norms. As a result, rather than destroy social order, such irrational statements integrate the speaker into society while also providing compensation for the pains of being integrated. To describe these kinds of statements Karp indicates that he will borrow a concept from the late work of Adorno: “jargon.” However, Karp announces that he will critique Adorno for depending too much on the very phenomenological tradition that his Jargon of Authenticity is meant to criticize. Adorno’s concept is not a concept at all, Karp alleges, but a “reservoir for collecting Adorno-poetry” (Sammelbecken Adornoscher Dichtung) (2002, 58). Karp’s own goal is to clarify jargon into an analytical concept that could then be incorporated into a classical sociological framework. As synecdoche for classical sociology, Karp takes the work of Talcott Parsons.

The second chapter of Karp’s dissertation, a reading and critique of Parsons, had appeared in the Freud Institute publication, Cases for the Theory of the Drives. In his editor’s introduction to that volume, Karp had stated that the goal of their group had been to integrate psychoanalytic concepts in general and Freud’s theory of the drives in particular into frameworks provided by classical sociology. The volume begins with an essay by Brede on the failure of sociology as a discipline to account for the role that aggression plays in social integration. (Brede 1999, 11-45, credits Georg Simmel with having developed an account of the active role that aggression played in creating social cohesion; more on that below.) Karp reiterates Brede’s complaint, directing it against Parsons, whose account of aggression he calls “incomplete” or “watered down” (2002, 11). In the version that appears in his dissertation, several sections of literature review establish background assumptions and describe what Karp takes to be Parsons’ achievement: integrating the insights of Émile Durkheim and Sigmund Freud. Taking, from Durkheim, a theory of how societies develop systems of norms, and from Freud, how individuals internalize them, Parsons developed an account of culture as the site where the integration of personality and society takes place.

For Parsons, as Karp reads him, culture itself is best understood as a system constituted through “interactions.” Karp credits Parsons with shifting the paradigm from a subject of consciousness to a subject in communication—translating the Freudian superego into sociological form, so that it appears, not as a moral enforcer, but as a psychic structure communicating cultural norms to the conscious subject. Yet, Karp protests that there are, in fact, parts of personality not determined by culture, and not visible to fellow members of a culture so long as an individual does not deviate from established norms of interaction. Parsons’ theory of aggression remains incomplete on at least two counts, then. First, Karp argues, Parsons fails to recognize aggression as a primary drive, treating it only as a secondary result that follows when the pleasure principle finds itself thwarted. Karp, by contrast, adopts the position that a drive toward death or destruction is at least as fundamental as the pleasure principle. Second, because Parsons defines aggression in terms of harms to social norms, he cannot explain how aggression itself can become a social norm, as it did in Nazi Germany. For an explanation of how aggressive impulses come to be integrated into society, Karp turns instead to Adorno.

In Adorno’s Jargon of Authenticity, Karp found an account of how aggression constitutes itself in language and, through language, mediates social integration (2002, 57). Adorno’s lengthy essay, which he had originally intended to constitute one part of Negative Dialectics, resists easy summary. The essay begins by identifying theological overtones that, Adorno says, emanate from the language used by German existentialists—and by Martin Heidegger in particular. Adorno cites not only “authenticity,” but terms like “existential,” “in the decision,” “commission,” “appeal,” and “encounter,” as exemplary (3). While the existentialists claim that such language constitutes a form of resistance to conformity, Adorno argues that it has in fact become highly standardized: “Their unmediated language they receive from a distributor” (14). Making fetishes of these particular terms, the existentialists decontextualize language in several respects. They do so at the level of the sentence—snatching certain favored words out of the dialectical progression of thought as if meaning could exist without it. At the same time, the existentialist presents “words like ‘being’ as if they were the most concrete terms” and could obviate abstraction, the dialectical movement within language. The function of this rhetorical practice is to make reality seem simply present, and give the subject an illusion of self-presence—replacing consciousness of historical conditions with an illusion of immediate self-experience. The “authenticity” generated by jargon therefore depends on forgetting or repressing the historically objective realities of social domination.

Beyond simply obscuring the realities of domination, Adorno continues, the jargon of authenticity spiritualizes them. For instance, Martin Heidegger turns the real precarity of people who might at any time lose their jobs and homes into a defining condition of Dasein: “The true need for residence consists in the fact that mortals must first learn to reside” (26). The power of such jargon—which transforms the risk of homelessness into an essential trait of Dasein—comes from the fact that it expresses human need, even as it disavows it. To this extent, jargon has an a- or even anti-political character: it disguises current and contingent effects of social domination as eternal and unchangeable characteristics of human existence. “The categories of jargon are gladly brought forward, as though they were not abstracted from generated and transitory situations but rather belonged to the essence of man,” Adorno writes. “Man is the ideology of dehumanization” (48). Jargon turns fascist insofar as it leads the person who uses it to perceive historical conditions of domination—including their own domination—as the very source of their identity. “Identification with that which is inevitable remains the only consolation of this philosophy of consolation,” Adorno writes. “Its dignified mannerism is a reactionary response to the secularization of death” (143, 144).

Karp says at the outset that his goal is to make Adorno’s collection of observations about jargon “systematic.” In order to do so, he approaches the subject from a different perspective than Adorno did: focused on the question of what psychological needs jargon fulfills. For Karp, the achievement of jargon lies in its “double function” (Doppelfunktion). Jargon both acknowledges the objective forces that oppress people and allows people to adapt or accommodate themselves to those same forces by eternalizing them—removing them from the context of the social relations where they originate, and treating them as features of human existence in general. Jargon addresses needs that cannot be satisfied, because they reflect the realities of living in a society characterized by domination, but also cannot be acted upon, because they are taboo. For Karp, insofar as jargon is a kind of speech that designates speakers as belonging to an in-group, it also expresses an unconscious drive toward aggression. In jargon we see the aggression that drives individuals to exclude others from the social world doing its binding work. It is on these grounds that Karp argues that aggression is a constitutive part of jargon—its ever-present, if unacknowledged, obverse.

Karp grants that Adorno is concerned with social life. The Jargon of Authenticity investigates precisely the social function of ontology, or how it turns “authenticity” into a cultural form, circulated within mass culture. Adorno also alludes to the specifically German inheritance of jargon—the resemblance between Heidegger’s celebration of völkisch rural life and Nazi celebration of the same (1973, 3). Yet, Karp argues, Adorno does not provide an account of how a deception or illusion of authenticity came to be a structure in the life-world. Even as he criticizes phenomenological ontology, Adorno relies on a concept of language that is itself phenomenological. Echoing critiques by Axel Honneth (1991) of Horkheimer and Adorno’s failures to account for the unique domain of “the social,” Karp turns to the same thinkers Karola Brede used in her article on “Social Integration and Aggression”: Sigmund Freud and Georg Simmel.

In that article, Brede develops a reading that joins Freud’s and Simmel’s accounts of the role of the figure of “the stranger” in modern societies. In Civilization and its Discontents, Brede argues, Freud described “strangers” in terms that initially appear incompatible with the account Simmel had put forth in his famous 1908 “Excursus on the Stranger.” Simmel described the mechanisms whereby social groups exclude strangers in order to eliminate danger—thereby controlling the “monstrous reservoir of aggressivity” that would otherwise threaten social structure. (The quote is from Parsons.) Freud wrote that, despite the Biblical commandment to love our neighbors, and the ban on killing, we experience a hatred of strangers, because they make us experience what is strange in us, and fear what in them cannot be fit into our cultural models. Brede concludes that it is only by combining Freudian psychodynamics with Simmel’s account of the role of exclusion in social formation that critical social theory could account for the forms of violence that dominated the history of the twentieth century (Brede 1999, 43).

Karp contrasts Adorno with both Freud and Simmel, and finds Adorno to be more pessimistic than either of these predecessors. Compared to Freud, who argued that culture successfully repressed both libidinal and destructive drives in the name of moral principles, Karp writes that Adorno regarded culture as fundamentally amoral. Rather than successfully repressing antisocial drives, Karp writes, late capitalist culture sates its members with “false satisfactions.” People look for opportunities to express their needs for self-preservation. However, since they know that their needs cannot be fully satisfied, they simultaneously fall over themselves to destroy the memory of the false fulfillment they have had. Repressed awareness of the false nature of their own satisfaction produces the ambient aggression that people take out on strangers.

For Simmel, the stranger is part of all modern societies, Karp writes. For Adorno, the stranger extends an invitation to violence. Jargon gains its power from the fact that those who speak, and hear, it really are searching for a lost community. The very presence of the stranger demonstrates that such community cannot be simply given; jargon is powerful precisely in proportion to how much the shared context of life has been destroyed. It therefore offers a “dishonest answer to an honest longing” for intersubjectivity, gaining strength in proportion to the intensity of the need that has been thwarted (Karp 2002, 85). Wishes that contradict social norms are brought into the web of social relations (Geflecht der Lebenswelt), in such a way that they do not need to be sanctioned or punished for violating social norms (91). On the contrary, they serve to bind members of social groups to one another.

Testing Jargon

As a case study to demonstrate the usefulness of his modified concept of jargon, Karp takes up a notorious episode in post-wall German intellectual history: a speech that the celebrated novelist Martin Walser gave in October 1998, at St. Paul’s Church in Frankfurt. The occasion was Walser’s acceptance of the 1998 Peace Prize of the German Book Trade. The novelist had traveled a complex political itinerary by the late 1990s. Documents released in 2007 would uncover the fact that as a teenager, during the final years of the Second World War, Walser joined the Nazi Party and fought as a member of the Wehrmacht. But he first became publicly known as a left-wing writer. In the 1950s, Walser attended meetings of the informal but influential German writers’ association Gruppe 47 and received their annual literary prize for his short story, “Templones Ende”; in 1964 he attended the Frankfurt Auschwitz trials, where low-ranking officials were charged and convicted for crimes that they had perpetrated during the Holocaust. In his 1965 essay about that experience, “Our Auschwitz,” Walser insisted on the collective responsibility of Germans for the horrors of the Nazi period; indeed he criticized the emphasis on spectacular cruelty at the trial, and in the media, to the extent that this emphasis allowed the public to maintain an imaginary distance between themselves and the Nazi past (Walser 2015, 217-56). Walser supported Social Democratic Party member Willy Brandt for Chancellor and even joined the German Communist Party during that decade. By the 1980s, however, Walser was widely perceived to have migrated back to the right. And when he gave his speech “Experiences Composing a Sermon” on the sixtieth anniversary of Kristallnacht, he used the occasion to attack the public culture of Holocaust remembrance. Walser described this culture as a “moral cudgel” or “bludgeon” (Moralkeule).

“Experiences Composing a Sermon” adopts a stream-of-consciousness, rather than argumentative, style in order to explain why Walser refused to do what he said was expected of him: to speak about the ugliness of German history. Instead, he argued that no further collective memorialization of the Holocaust was necessary. There was no such thing, he said, as collective or shared conscience at all: conscience should be a private matter. Critics and intellectuals he disparaged as “preachers” were “instrumentalizing” and “vulgarizing” memory, when they exhorted the public constantly to reflect on the crimes of the Nazi period. “There is probably such a thing as the banality of good,” Walser quipped, echoing Hannah Arendt (2015, 513). He did not spell out what ends he thought that these “preachers” aimed to instrumentalize German guilt for. He concluded by abruptly calling on the newly elected president Roman Herzog, who was in attendance, to free the former East German spy, Rainer Rupp, from prison. Walser’s speech received a standing ovation, though not, notably, from Ignatz Bubis, then the president of the Central Council of Jews in Germany, who was also in attendance. The next day, in the Frankfurter Allgemeine Zeitung, Bubis called the speech an act of “intellectual arson” (geistige Brandstiftung). The controversy that followed generated a huge amount of debate among German intellectuals and in the German and international media (Cohen 1998). Two months later, the offices of the Frankfurter Allgemeine Zeitung hosted a formal debate between the two men. It lasted for four hours. FAZ published a transcript of their conversation in a special supplement (Walser and Bubis 1999).

In February and March 1999, Karola Brede delivered two lectures about the controversy at Harvard University, which she subsequently published in Psyche (2000, 203-33). Brede examined both the text of Walser’s original speech and the transcript of his debate with Bubis in order to determine, first, why Walser’s speech had been received so enthusiastically, and second, whether Walser, despite eschewing explicitly antisemitic language, had in fact “taken the side of anti-Semites.” In order to explain why Walser’s speech had attracted so much attention, Brede carried out a close textual analysis. She found that, although Walser had not presented a very cogent argument, he had successfully staged a “relieving rhetoric” (Entlastungsrhetorik) that freed his audience from the sense of awkwardness or self-consciousness that they felt talking about Auschwitz in public and replaced these negative feelings with a positive sense of heightened self-regard. Brede argued that Walser used jargon, in the sense of Adorno’s “jargon of authenticity,” in order to flatter listeners into thinking that they were taking part in a daring intellectual exercise, while in fact activating anti-intellectual feelings. (In a footnote she recommended an “unpublished paper” by Karp, presumably from his dissertation, for further reading; Brede 2000, 215). She concluded that indeed Walser had taken the side of antisemites because, in both his speech and his subsequent debate with Bubis, he constructed a point of identification for listeners (“we Germans”) that systematically excluded German Jews (203). By organizing his speech entirely around “perpetrators” and the “critics” who shamed them, Walser elided the perspective of the Nazis’ victims. Invoking Simmel’s essay on “The Stranger” again, Brede argued that Walser’s behavior during his debate with Bubis offered a model of how unconscious aggression could drive social integration through exclusion. Regardless of what Walser said he felt, to the extent that his rhetoric excluded Bubis from his definition of “we Germans” as a Jew, his conduct had been antisemitic.

In the final chapter of his dissertation, Karp also offers a reading of Walser’s prize acceptance speech, arguing that Walser made use of jargon in Adorno’s sense. Like Brede, Karp bases his argument on close textual analysis. He catalogs several specific literary strategies that, he says, enabled Walser to appeal to the unconscious or repressed emotions of his listeners without having to convince them. First, Karp tracks how Walser played with pronouns in the opening movement of the speech in order to eliminate distance and create identification between himself and his audience. Walser shifted from describing himself in the third person singular (the “one who had been chosen” for the prize) to the first-person plural (“we Germans”). At the same time, by making vague references to intellectuals who had made public remembrance and guilt compulsory, Walser created the sensation that he and the listeners he had invited to identify with his position (“we”) were only responding to attacks from outside: that “we” were the real victims. (In her article, Brede had quipped that this narrative of victimhood “could have come from a B-movie Western”; Brede 2000, 214). Through this technique, Karp writes, Walser created the impression that if “we” were to strike back against the “Holocaust preachers,” this would only be an act of self-defense.

Karp stresses that the content of “Experiences Composing a Sermon” was less important than the effect that these rhetorical gestures had of making listeners feel that they belonged to Walser’s side. In the controversy that followed Walser’s acceptance speech, critics often asked which “intellectuals” he had meant to criticize; these critics, Karp says, missed the point. It was not the content of the speech, but its form, that mattered. It was through form that Walser had identified and addressed the psychological needs of his audience. That form did not aim to convince listeners; it did not need to. It simply appealed to (repressed) emotions that they were already experiencing.

For Adorno, the anti-political or fascist character of jargon was directly tied to the non-dialectical concept of language that jargon advanced. By eliminating abstraction from philosophical language, and detaching selected words from the flow of thought, jargon made absent things seem present. By using such language, existentialism attempted to construct an illusion that the subject could form itself outside of history. By raising historically contingent experiences of domination to defining features of the human, jargon presented them as unchangeable. And by identifying humanity itself with those experiences, it identified the subject with domination.

Karp does not demonstrate that Walser’s “jargon” performed any of these functions, precisely. Rather, he focuses on the psychodynamics motivating his speech. Karp proposes that the pain (Leiden) that Walser’s speech expressed resembled the “domination” (Zwang) that Adorno recognized in jargon. While Adorno’s jargon made the absent or abstract seem present, through an act of linguistic fetishization, Walser’s jargon embodied the obverse impulse: to wish the discomfort created by the presence of history’s victims away.

Karp is less concerned with the history of domination, that is, than with Freudian drives. For Adorno, the purpose of carrying out a determinate negation of jargon was to create the conditions of possibility for critical theory to address the real needs to which jargon constituted a false response. For Karp, the interest of the project is more technical: his goal is to uncover forms and patterns of speech that admit aggression into social life and give it a central role in consolidating identity. By combining culturally legitimated expressions with taboo ones, Karp argues, Walser created an environment in which his controversial opinion could be accepted as “obvious” or “self-evident” (selbstverständlich) by his audience. That is, Walser created a linguistic form through which aggression could be integrated into the life-world.

Unlike Adorno (or Brede), Karp refrains from making any normative assessment of this achievement. His “systematization” of the concept of jargon empties that concept of the critical force that Adorno meant for it to carry. If anything, the tone of the final pages of Aggression in the Life-World is forgiving. Karp concludes by arguing that Walser was not necessarily aware of the meaning of his speech; indeed, that he probably was not. By allowing his audience to express their taboo wishes to be done with Holocaust remembrance, Karp writes, Walser convinced them that “these taboos should never have existed.” Then he cuts to his bibliography.

Grand Hotel California Abyss

The abruptness of the ending of Aggression in the Life-World is difficult to interpret. At one level, Karp’s apparent lack of interest in the ethical and political implications of his case study reflects his stated goals and methods. From the beginning, he has set out to reveal that the social is constituted through acts of unconscious aggression, and that this aggression becomes legible in specific linguistic interactions, rather than to evaluate the effects of aggression itself. Reading Walser, Karp explicitly privileges form over content, treating the former as symptomatic of unstated meanings and effects. Granting the critic authority over the text he is analyzing, such an approach presumes the author under analysis to be ignorant, if not innocent, of what he really has at stake; it treats conscious attitudes and overt arguments as holding, at most, a secondary interest. At another level, the banal explanations for Karp’s tone and brevity may be the most plausible. He was writing in a non-native language; like many graduate students, he may have finished in haste.[9] In any case, his decision to eschew the kinds of judgments made by both his subject, Adorno, and his mentor, Brede, is striking, all the more so because Karp is descended from German Jews and “grew up in a Jewish family” (Karp 2019a). This choice reflects a different mode of engagement with critical theory than scholars of either digital media or digitally mediated right-wing movements have observed.

Historians have shown that the Frankfurt School critiques of mass media helped shape the idea that digital media could constitute a more democratic alternative. Fred Turner has argued that the research Adorno conducted on the role of radio and cinema in shaping the authoritarian personality, as well as the proximity of Frankfurt School scholars to the Bauhaus and other practicing artists, generated a set of beliefs about the democratic character of interactivity (Turner 2013). Orit Halpern is more critical of the essentially liberal assumptions of media and technology critique in which she, too, places Adorno (2015, 18-19). However, like Turner, Halpern identifies the emergence of interactivity as a key epistemic shift away from the Frankfurt School paradigm that opposed “attention” and “distraction.” Cybernetics redefined the problem of “spectatorship” by transforming the spectator from an individual into a site of perceptions and cognitions—an “interface or infrastructure for information processing.” Where radio, cinema, and television had promoted conformity and passivity, cybernetic media promised to facilitate individual choice and free expression (2015, 224-6).

More recently, critics and scholars attempting to account for the phobic fascination that new right-wing movements show for “Cultural Marxism” have analyzed it in a variety of ways. The least sophisticated take at face value the claims of “alt-right” figures that they are only reacting to the ludicrous and pernicious excesses of their opponents.[10] More substantial interpretations have described the far-right fixation on the Frankfurt School as a “dialectic of counter-Enlightenment” or form of “inverted appropriation.” Martin Jay (2011) and Andreas Huyssen (2017, 2019) both argue that the attraction of critical theory for the right lies in the dynamics of projection and disavowed recognition that it sets in motion. As Huyssen puts it, “wider circles of American white supremacists and their publications… have been drawn to critique and deconstruction because, on those traditions, they project their own destructive and nihilistic tendencies” (2017).

Aggression in the Life-World does none of these things. Karp’s dissertation does not take up the critiques of mass media or the authoritarian personality that were canonized in the Anglo-American world at all, much less use them to develop democratic alternatives. Nor does it project its own penchant for destruction onto its subjects. In contrast with the “lunatic fringe” (Jay 2011, 30), Karp does not carry out an “inverted appropriation” of critical theory so much as a partial one. He adapts Frankfurt School concepts for technical purposes, making them more instrumentally useful to the disciplines of sociology or social psychology by abstracting them from their contexts. In the process, he also abandons the Frankfurt School commitment to emancipation. It is at this level of abstraction that his neo-Marxism, from which Marx and materialism have all but disappeared, can coexist with the nationalism that he and Thiel invoke to defend Palantir.

I asked at the beginning of this paper what beliefs Karp shares with Peter Thiel and what their common commitments might reveal about the self-consciously “contrarian” or “heterodox” network of actors that they inhabit. One answer that Aggression in the Life-World makes evident is that both men regard the desire to commit violence as a constant, founding fact of human life. Both also believe that this drive expresses itself in social forms like language or group structure, even if speakers or group members remain unaware of their own motivations. These are ideas that Thiel attributes to the work of the eclectic French theorist René Girard, with whom he studied at Stanford, and whose theories of mimetic desire, scapegoating, and herd mentality he has often cited. In 2006 Thiel’s nonprofit foundation established an institute to promote the study of Girard and support the further development of mimetic theory; this organization, Imitatio, remains one of the foundation’s three major projects (Daub 2020, 97-112).

The text that Karp chose to analyze, as his case study, also shares a set of concerns with Thiel’s writings and statements against campus multiculturalism and political correctness; Walser’s speech became a touchstone of debates about historical memory in Germany, in which the newly imported Americanism politische Korrektheit circulated widely. In his dissertation, Karp does not celebrate Walser’s taboo speech in the same way that Thiel and his associates have sometimes celebrated violations of speech norms.[11] However, he does assert that jargon, and the unconscious aggression that it expresses, plays a role in the formation of all social groups, and refrains from evaluating whether Walser’s jargon was particularly problematic. Of course, the term “jargon” itself became a commonplace during the US culture wars in the 1980s and 1990s, used to accuse academics and university administrators who purported to be speaking for vulnerable populations of in fact deploying obscure terms to aggrandize themselves. Thiel and his co-author David O. Sacks devote a chapter of The Diversity Myth to an account of how the vagueness of the word “multiculturalism” enabled activists and administrators at Stanford to use it in this manner (1995, 23-49). The idea that such terms express ressentiment and a will to power is consistent with the theoretical framework that Karp went on to develop.

Ironically, by attempting to expunge jargon of its subjective or impressionistic content, Karp renders it less materially objective. Rather than locating jargon in specific experiences of modernity, he transforms it into an expression of drives that, because they are timeless, are merely psychological. Karp makes a version of the eternalizing move that Adorno criticizes in Heidegger, in other words. Rather than elevating precarity into the essence of the human, Karp makes aggressive violence the substance of the social. In the process, he empties the concept of jargon of its critical power. When he arrives at the end of Walser’s speech, a speech that Karp characterizes as consolidating community based on unspeakable aggression, he can conclude only that it was effective.

A still greater irony in retrospect may be how, in Karp’s telling, Adorno’s jargon anticipates the software tools Palantir would develop. By tracing the rhetorical patterns that constitute jargon in literary language, Karp argues that he can reveal otherwise hidden identities and affinities—and the drive to commit violence that lies latent in them. By looking back to Adorno, he points toward a possible critique of big data analytics as a kind of authenticity jargon. That is, a way of generating and eternalizing false forms of selfhood. In data analysis, the role of the analyst is not to demystify and dispel reification. On the contrary, it is precisely to fix identity from its digital traces and to make predictions on the basis of the same. For Adorno, jargon is a form of language that seems to authenticate identity—but only seems to. The identities it makes available to the subject are based on an illusion that jargon sustains by suppressing the self-difference that historicity introduces into language. The illusion it offers is of timeless “human” experience. It covers for domination insofar as it makes the human condition—or rather, human conditions as they are at the time of speaking—appear unchangeable.

Big data analytics could be said to constitute an authenticity jargon in this sense: although they treat the data set under analysis as having something like an unconscious, they eliminate the temporal gaps and spaces of ambiguity that drive psychoanalytic interpretation. In place of interpretation, data analytics substitutes correlations that it treats simply as given. To a machine learning algorithm that has been trained on data sets that include zip codes and rates of defaulting on mortgage payments, for instance, it does not matter why mortgagees in a given zip code may have been more likely to default in the past. Nor will the algorithm that recommends rejecting a loan application necessarily explain that the zip code was the deciding factor. Like the existentialist’s illusion of immediate experience, these procedures generate an aura of incontestable self-evidence.

As in Adorno, here, the loss of particular contexts can serve to conceal, and thus perpetuate, domination. Algorithms take the histories of oppression embedded in training data and project them into the future, via predictions that powerful institutions then act on. If the identities constituted in this way are false, the reifications they generate do real work, and can cause real harm. And yet, to read these figures historically is to recognize that they need not come true. This is not an interpretive path that Karp pursues. But for those of us concerned about the relationship between digital technologies and justice, this repressed insight of his dissertation is the most critical to follow.

_____

Moira Weigel is a Junior Fellow at the Harvard Society of Fellows and an editor and cofounder of Logic Magazine. She received her PhD from the combined program in Comparative Literature and Film and Media Studies at Yale University in 2017.

_____

Notes

[1] Translations from German are mine unless otherwise noted.

[2] In 2017, when activists doxxed the founder of the neofascist blog the Right Stuff and the antisemitic podcasts Fash the Nation and The Daily Shoah, who went by the alias Mike Enoch, they revealed that he was in fact a programmer named Michael Peinovich (Marantz 2019, 275-9). Curtis Yarvin, who wrote a widely read blog advocating the end of democracy under the name Mencius Moldbug, also worked as a software engineer (Gray 2017). Several journalists have documented the interest that figures in or adjacent to the tech industry evince in Yarvin’s Neoreaction (NRx) or Dark Enlightenment (Gray 2017; Goldhill 2017). Prominent white nationalist media entrepreneurs also claim to have substantial followings in the tech industry. In 2017, Andrew Anglin told a Mother Jones reporter that Santa Clara County was the highest source of inbound traffic to his website, The Daily Stormer; Chuck Johnson said the same about his (now defunct) website Got News (Harkinson 2017). In response to an interview question about his “average” supporter, the white nationalist Richard Spencer claimed that “many in the Alt-Right are tech savvy or actually tech professionals” (Hawley 2017, 78).

[3] James Damore, the engineer who wrote the July 2017 memo, “Google’s Ideological Echo Chamber,” and was subsequently fired, toured the right wing speaking circuit (Tiku 2019, 85-7). Brian Amerige, the Facebook engineer who identified himself to the New York Times in July 2018 as the creator of a conservative group on Facebook’s internal forum, Workplace, and then left the company, did the same (Conger and Frenkel 2018). Shortly after, it was reported that Oculus cofounder Palmer Luckey’s departure from the company in 2017 had also been driven by conflicts with management over his support of Donald Trump (Grind and Hagey 2018); Luckey has since publicly claimed to speak on behalf of a silent majority of “tech conservatives” (Luckey 2018). Arne Wilberg, a longtime recruiter of technical employees for Google and YouTube, filed a reverse discrimination suit in 2018, alleging that he had been fired for “opposing illegal hiring practices… systematically discriminating in favor of job applicants who are Hispanic, African American, or female, against Caucasian and Asian men” (Wilberg v. Google 2018). Most recently, in August 2019, The Wall Street Journal reported that the former Google engineer Kevin Cernekee had been fired in 2017 in retaliation for expressing “conservative” viewpoints on internal listservs (Copeland 2019). Former colleagues subsequently published screenshots showing that, among other things, Cernekee had proposed raising money for a bounty for finding the masked protestor who punched Richard Spencer at the Presidential inauguration in 2017 using WeSearchr, the now-defunct fundraising platform run by Holocaust “revisionist” Chuck C. Johnson. They also shared screenshots showing that Cernekee had defended two neo-Nazi organizations, The Traditionalist Workers Party and Golden State Skinheads, suggesting that they should “rename themselves to something normie-compatible like ‘The Helpful Neighborhood Bald Guys’ or the ‘Open Society Institute’” (Wacker 2019; Tiku 2019, 84). Like Damore, Amerige, and Wilberg, Cernekee received national media coverage.

[4] For instance, emails that BuzzFeed reporter Joe Bernstein obtained from Breitbart.com stated that Thiel invited Curtis Yarvin to watch the 2016 election results at his home in Hollywood Hills, where he had previously hosted Breitbart tech editor Milo Yiannopoulos; New Yorker writer Andrew Marantz reported running into Thiel at the “DeploraBall” that took place on the eve of Trump’s inauguration (2019, 47-9).

[5] Thiel supported Hawley’s campaign for Attorney General of Missouri in 2016 (Center for Responsive Politics); in that office, Hawley initiated an antitrust investigation of Google (Dave 2017) and a probe into Facebook’s exploitation of user data (Allen and Ortiz 2018). Thiel later donated to Hawley’s 2018 Senate campaign (Center for Responsive Politics); in the Senate, Hawley has sponsored multiple bills to regulate tech platforms (US Senate 2019a, 2019b, 2019c, 2019d, 2019e, 2019f, 2019g). These activities earned him praise from Trump at a White House Social Media Summit on the theme of liberal bias at tech companies, where Hawley also spoke (Trump 2019a).

[6] Pat Buchanan devoted a chapter to the subject, entitled “The Frankfurt School Comes to America,” in his 2001 Death of the West. Breitbart editor Michael Walsh published an entire book about critical theory, in which he described it as “the very essence of Satanism” (Walsh 2016, 50). Andrew Breitbart himself devoted a chapter to it in his memoir (Breitbart 2011, 113). Jordan Peterson more often rails against “postmodernism,” or “political correctness.” However, he too regularly refers to “Cultural Marxism”; at the time of writing, an explainer video that he produced for the pro-Trump Epoch Times has tallied nearly 750,000 views on YouTube (Peterson 2017).

[7] The memo that engineer James Damore circulated to his colleagues at Google presented a version of the Cultural Marxism conspiracy in its endnotes, as fact. “As it became clear that the working class of the liberal democracies wasn’t going to overthrow their ‘capitalist oppressors,’” Damore wrote, “the Marxist intellectuals transitioned from class warfare to gender and race politics” (Conger 2017). The group that Brian Amerige started on Facebook Workplace was called “Resisting Cultural Marxism” (Conger and Frenkel 2018).

[8] The Stanford Review, which Thiel founded late in his sophomore year and edited throughout his junior and senior years at the university, devoted extensive attention to questions of speech on Stanford’s campus, which became a focal point of the US culture wars and drew international media attention when the academic senate voted to (slightly) revise its core curriculum in 1988 (see Hartman 2019, 227-30). In 1995, with fellow Stanford alumnus (and later PayPal Chief Operating Officer) David O. Sacks, Thiel published The Diversity Myth, a critique of the “debilitating” effects of “political correctness” on college campuses that, among other things, compared multicultural campus activists to “the bar scene from Star Wars” (xix). In 2018 he moved to Los Angeles, saying that political correctness in San Francisco had become unbearable (Peltz and Pierson 2018; Solon 2018) and in 2019 Founders Fund, the venture capital firm where he is a partner, announced that they would be sponsoring a conference to promote “thoughtcrime” (Founders Fund 2019).

[9] Aggression in the Life-World is significantly shorter than either of the other two dissertations submitted to the sociology department at Frankfurt that year: Margaret Ann Griesese’s The Brazilian Women’s Movement Against Violence clocked in at 314 pages, and Konstantinos Tsapakidis’s Collective Memory and Cultures of Resistance in Ancient Greek Music at 267; Karp’s is 129.

[10] Angela Nagle (2017) put forth an extreme version of this argument, arguing that the excesses of “social justice warrior” identity politics provoked the formation of the alt-right and that trolls like Milo Yiannopoulos were only replicating tactics of “transgression” that had been pioneered by leftist intellectuals like bell hooks and institutionalized on liberal campuses and in liberal media. Kakutani similarly argued that the Trumpist right was simply taking up tactics that the relativism of “postmodernism” had pioneered in the 1960s (2018, 18).

[11] In The Diversity Myth Sacks and Thiel describe one instance of resistance to the Stanford speech code, which was adopted in May 1990 and revoked in March 1995, as heroic. The incident took place on the night of January 19, 1992, when three members of the Alpha Epsilon Pi fraternity, Michael Ehrman, Keith Rabois, and Bret Scher, were walking home from a party through one of Stanford’s residential dormitories. Rabois, then a first year law student, began shouting slurs at the home of a resident tutor in the dormitory, who had been involved in the expulsion of Ehrman’s brother Ken from residential housing four years earlier, after Ken called the resident tutor assigned to him a “faggot.” “Faggot! Hope you die of AIDS!” Rabois shouted. “Can’t wait until you die, faggot.” He later confirmed and defended these statements in a letter to the Stanford Daily. “Admittedly, the comments made were not very articulate, nor very intellectual nor profound,” he wrote. “The intention was for the speech to be outrageous enough to provoke a thought of ‘Wow, if he can say that, I guess I can say a little more than I thought.’” The speech code, which had not until that point been used to punish any student, was not used to punish Rabois; however, Thiel and Sacks describe the criticism of Rabois from administrators and fellow students that followed as a “witch hunt” (1995, 162-75). Rabois subsequently transferred to Harvard but later worked with Thiel at PayPal and later as a partner at Founders Fund. More recently, the blog post that Founders Fund published to announce the Hereticon conference cited in Footnote 8 described violating taboos on speech as its goal: “Imagine a conference for people banned from other conferences. Imagine a safe space for people who don’t feel safe in safe spaces. Over three nights we’ll feature many of our culture’s most important troublemakers in the fields of knowledge necessary to the progressive improvement of our civilization” (2019).

_____

Works Cited

- Adorno, Theodor. 1973. The Jargon of Authenticity. Translated by Knut Tarnowski and Frederic Will. London and New York: Routledge.

- Ahmed, Maha. 2018. “Aided by Palantir, the LAPD Uses Predictive Policing to Monitor Specific People and Neighborhoods.” The Intercept (May 11).

- Alden, William. 2017. “There’s a Fight Brewing Between the NYPD and Silicon Valley’s Palantir.” BuzzFeed (Jun 28).

- Allen, Jonathan and Erik Ortiz. 2018. “Missouri Attorney General open probe into Facebook data collection.” NBC News (April 2).

- Barbrook, Richard and Andy Cameron. 1996. “The Californian Ideology.” Science as Culture 6:1. 44-72.

- Bareuther, Herbert, Hans-Joachim Busch, Dieter Ohlmeier and Tomas Plänkers, eds. 1989. Forschen und Heilen: auf dem Weg zu einer psychoanalytischen Hochschule. Frankfurt am Main: Suhrkamp.

- Berkowitz, Bill. 2003. “‘Cultural Marxism’ Catching On.” Intelligence Report. Southern Poverty Law Center (Aug 15).

- Bernstein, Joe. 2017. “Alt-White: How the Breitbart Machine Laundered Racist Hate.” BuzzFeed (October 5).

- Brede, Karola. 1972. Sozioanalyse psychosomatischer Störungen. Zum Verhältnis von Soziologie und Psychosomatischer Medizin. Frankfurt am Main: Athenäum.

- Brede, Karola. 1976. “Der Trieb als humanspezifische Kategorie: Alfred Lorenzers problematischer Beitrag zum Verhältnis von Interaktion und Trieb.” Psyche 30:6. 473-502.

- Brede, Karola. 1986. Individuum und Arbeit: Ebenen ihrer Vergesellschaftung. Frankfurt am Main: Campus Verlag.

- Brede, Karola. 1995. “‘Neuer’ Autoritarismus und Rechtsextremismus: Eine zeitdiagnostische Mutmaßung.” Psyche 49:11. 1019-1042.

- Brede, Karola. 1995. Wagnisse der Anpassung im Arbeitsalltag. Opladen: Westdeutscher Verlag.

- Brede, Karola. 1997. “Die psychoanalytische Zeitdiagnose und das Geschichtsbewußtsein der Deutschen.” Psyche 51:9-10. 875-904.

- Brede, Karola. 2000. “Die Walser-Bubis-Debatte: Aggression als Element öffentlicher Auseinandersetzung.” Psyche 54:3. 203-233.

- Brede, Karola and Alexander Karp. 1997. “Eliminatorischer Antisemitismus: Wie ist die These zu halten?” Psyche 51:6. 606-628.

- Brede, Karola and Margarete Mitscherlich-Nielsen. 1996. “Das Sigmund-Freud-Institut unter Alexander Mitscherlich—ein Gespräch.” In Psychoanalyse in Frankfurt am Main: Zerstörte Anfänge, Wiederannäherung, Entwicklungen. Edited by Tomas Plänkers, Michael Laier, Hans-Heinrich Otto, Hans-Joachim Rothe and Helmut Siefert. Tübingen: edition diskord.

- Breitbart, Andrew. 2011. Righteous Indignation: Excuse Me While I Save the World! New York: Grand Central Publishing.

- Broockman, David E., Gregory Ferenstein, and Neil Malhotra. 2019. “Predispositions and the Political Behavior of American Economic Elites: Evidence from Technology Entrepreneurs.” American Journal of Political Science, 63:1 (Jan). 212-233.

- Buchanan, Pat. 2001. Death of the West: How Dying Populations and Immigrant Invasions Imperil Our Culture and Civilization. New York: St. Martin’s Press.

- Buhr, Sarah. 2017. “Tech Employees Protest in Front of Palantir HQ.” TechCrunch (Jan 18).

- “Donor Lookup: Peter Thiel.” Center for Responsive Politics. Accessed May 7 2020.

- Chafkin, Max. 2019. “The Complicated Politics of Palantir’s CEO.” Bloomberg (Aug 22).

- Chun, Wendy Hui Kyong. 2005. Control and Freedom: Power and Paranoia in the Age of Fiber Optics. Cambridge, Mass.: MIT Press.

- Chun, Wendy Hui Kyong. 2011. Programmed Visions: Software and Memory. Cambridge, Mass.: MIT Press.

- Chun, Wendy Hui Kyong. 2016. Updating to Remain the Same: Habitual New Media. Cambridge, Mass.: MIT Press.

- Conger, Kate. 2017. “Here’s the Full 10 Page Anti-Diversity Screed Circulating Internally at Google.” Gizmodo (Aug 5).

- Conger, Kate and Sheera Frenkel. 2018. “Dozens at Facebook Unite to Challenge Its ‘Intolerant’ Liberal Culture.” The New York Times (Aug 28).

- Copeland, Rob. “Fired By Google, a Republican Engineer Hits Back.” The Wall Street Journal (Aug 1).

- Daub, Adrian. 2020. What Tech Calls Thinking: The Intellectual Bedrock of Silicon Valley. New York: Farrar, Straus and Giroux Originals x Logic Books.

- Dave, Paresh. 2017. “Google Faces Antitrust Investigation in Missouri.” Reuters (Nov 13).

- Federal Election Commission. 2018. Trump Victory Committee Campaign Finance Data.

- Founders Fund. 2019. “Hereticon.” Medium (Oct 1).

- Goldhill, Olivia. 2017. “The Neo-Fascist Philosophy That Underpins Both the Alt-Right and Silicon Valley Technophiles.” Quartz (Jun 18).

- Granato, Andrew. 2017. “How Peter Thiel and the Stanford Review Built a Silicon Valley Empire.” Stanford Politics Magazine (Nov 27).

- Gray, Rosie. 2017. “Behind the Internet’s Anti-Democracy Movement.” The Atlantic (Feb 10).

- Green, Joshua. 2017. Devil’s Bargain: Steve Bannon, Donald Trump, and the Nationalist Uprising. New York: Penguin Books.

- Greenberg, Andy. 2013. “How a ‘Deviant’ Philosopher Built Palantir, a CIA-Funded, Data-Mining Juggernaut.” Forbes (Sep 2).

- Grind, Kirsten and Keach Hagey. 2018. “Why Did Facebook Fire a Top Executive? Hint: It Had Something to Do With Trump.” The Wall Street Journal (Nov 11).

- Habermas, Jürgen. 1972. Knowledge and Human Interests. Translated by Jeremy J. Shapiro. New York: Beacon Press.

- Halpern, Orit. 2015. Beautiful Data: A History of Vision and Reason Since 1945. Durham, NC: Duke University Press.

- Harkinson, Josh. 2017. “Meet Silicon Valley’s Secretive Alt-Right Followers.” Mother Jones (March 10).

- Hartman, Andrew. 2019. A War for the Soul of America: A History of the Culture Wars, Second Edition. Chicago and London: University of Chicago Press.

- Harris, Mark. 2018. “If You Drive in Los Angeles, the Cops Can Track Your Every Move.” Wired (Nov 13).

- Hatmaker, Taylor. 2019. “Palantir Wins $800 Million Contract to Build the US Army’s Next Battlefield Software System.” TechCrunch (Mar 27).

- Hawley, George. 2017. Making Sense of the Alt-Right. New York: Columbia University Press.

- Hayes, Dennis. 1989. Behind the Silicon Curtain: The Seductions of Work in a Lonely Era. Boston: South End Press.

- Herzog, Dagmar. 2016. Cold War Freud: Psychoanalysis in an Age of Catastrophes. Princeton, NJ: Princeton University Press.

- Honneth, Axel. 1991. The Critique of Power: Reflective Stages in Critical Social Theory. Translated by Kenneth Baynes. Cambridge, Mass.: MIT Press.

- Huyssen, Andreas. 2017. “Breitbart, Bannon, Trump, and the Frankfurt School: A Strange Meeting of Minds.” Public Seminar (Sep 28).

- Huyssen, Andreas. 2019. “Behemoth Rises Again.” n+1 (Jul 29).

- Jay, Martin. 2011. “Dialectic of Counter-Enlightenment: The Frankfurt School as Scapegoat of the Lunatic Fringe.” Salmagundi 168/169. 30-40.

- Judson, Jen. 2019. “Palantir—Who Successfully Sued the Army—Has Won a Major Army Contract.” Defense News (Mar 29).

- Kakutani, Michiko. 2018. The Death of Truth: Notes on Falsehood in the Age of Trump. New York: Tim Duggan Books.

- Kantrowitz, Alex. 2019. “Silicon Valley’s Right Wing is Angry and Punching Back.” Buzzfeed (Jul 15).

- Karp, Alexander C. 1999. “Der Aggressionsbegriff in der Soziologie von Talcott Parsons.” Plädoyer für die Triebslehre: Gegen die Verarmung sozialwissenschaftlichen Denkens, Sigmund-Freud-Institut, Psychoanalytische Beiträge, 2. Tübingen: edition diskord. 7-9.

- Karp, Alexander C. 2002. Aggression in der Lebenswelt: Die Erweiterung des Parsonsschen Konzepts der Aggression durch die Beschreibung des Zusammenhangs von Jargon, Aggression und Kultur. PhD diss., J. W. Goethe University.

- Karp, Alexander C. 2019a. Interview with Matthias Doepfner (Part 2). pod, der Podcast von Axel Springer. Podcast Audio (Jan 23).

- Karp, Alexander C. 2019b. Interview at Davos. CNBC (Jan 23).

- Lewis, David. 2017. “We Snuck Into Seattle’s Super-Secret White Nationalist Convention.” The Stranger (Oct 4).

- Lewis, Rebecca. 2018. “Alternative Influence: Broadcasting the Reactionary Right on YouTube.” New York: Data & Society.

- Luckey, Palmer. 2018. “On Google Pulling Out of the Military’s AI Project Maven.” The Wall Street Journal (Nov 12).

- Mac, Ryan. 2017. “The Trump Administration Has Not Asked Palantir Technologies to Build a Muslim Registry.” Forbes (Jan 12).

- MacMillan, Douglas and Elizabeth Dwoskin. 2019. “The War Inside Palantir: Data-Mining Firm’s Ties to ICE Under Attack By Employees.” Washington Post (Aug 22).

- Marantz, Andrew. 2019. Antisocial: Online Extremists, Techno-Utopians, and the Hijacking of the American Conversation. New York: Viking Press.

- McPherson, Tara. 2000. “I’ll Take My Stand in Dixie-Net: White Guys, the South, and Cyberspace.” In Race in Cyberspace. Edited by Beth Kolko and Lisa Nakamura. London/New York: Routledge. 117-131.

- Metcalf, Jacob. 2016. “Getting Rigorously Naive, Or Why Tech Needs Philosophy.” Ethical Resolve (Feb 24).

- Mijente. 2019. “The War Against Immigrants: Trump’s Tech Tools Powered by Palantir.” Mijente.net (Aug).

- Mitscherlich, Alexander. 1967. Unfähigkeit zu trauern: Grundlagen kollektiven Verhaltens. München: Piper Verlag.

- Moyn, Samuel. 2018. “The Alt-Right’s Favorite Meme Is 100 Years Old.” The New York Times (Nov 13).

- Müller-Doohm, Stefan. 2016. Habermas: A Biography. Malden, Mass.: Polity Press.

- Neiwert, David. 2017. Alt-America: The Rise of the Radical Right in the Age of Trump. London/New York: Verso Books.

- Nagle, Angela. 2017. Kill All Normies: Online Culture Wars From 4chan and Tumblr to Trump and the Alt-Right. Alresford, Hants, UK: Zer0 Books.

- Nakamura, Lisa. 2007. Digitizing Race. Minneapolis: University of Minnesota Press.

- Peterson, Jordan B. 2017. “Postmodernism and Cultural Marxism.” Interview with The Epoch Times (Jul 6).

- Peltz, James F., and David Pierson. 2018. “Peter Thiel, Retreating from Silicon Valley’s Tech Scene, Is Moving to LA.” Los Angeles Times (Feb 15).

- Saxenian, AnnaLee. 1994. Regional Advantage: Culture and Competition in Silicon Valley and Route 128. Cambridge, Mass.: Harvard University Press.

- Sacks, David O. and Peter Thiel. 1995. The Diversity Myth: Multiculturalism and Political Intolerance on Campus. Oakland, CA: The Independent Institute.

- The Silicon Review. 2018. “Alexander Karp: From Eccentric Philosopher to Crime Fighting Pioneer.” The Silicon Review (Oct 1).

- Solon, Olivia. 2018. “As Peter Thiel Ditches Silicon Valley for LA, Locals Tout ‘Conservative Renaissance.’” The Guardian (Feb 16).

- Stern, Alexandra Minna. 2019. Proud Boys and the White Ethnostate: How the Alt-Right is Warping the American Imagination. New York: Beacon Press.

- Streitfeld, David. 2016. “‘I’m Here to Help,’ Trump Tells Tech Executives at Meeting.” The New York Times (Dec 14).

- Thiel, Peter. 2019a. “The Star Trek Computer Is Not Enough.” National Conservatism Conference (Jul 14).

- Thiel, Peter. 2019b. “Good for Google, Bad for America.” The New York Times (Aug 1).

- Tiku, Nitasha. 2018. “The Dirty War Over Diversity Inside Google.” Wired (Jan 26).

- Tiku, Nitasha. 2019. “Three Years of Misery Inside Google, the Happiest Company in Tech.” Wired (Sep 13).

- Trump, Donald. 2019a. “Remarks.” Presidential Social Media Summit (Jul 11).

- Trump, Donald. 2019b. “… are NOT planning to illegally subvert the 2020 Election.” Twitter (Aug 6).

- Turner, Fred. 2006. Counterculture to Cyberculture: Stewart Brand, the Whole Earth Catalog, and the Rise of Digital Utopianism. Chicago: University of Chicago Press.

- Turner, Fred. 2013. The Democratic Surround: Multimedia and American Liberalism from World War II to the Psychedelic Sixties. Chicago: University of Chicago Press.

- US Senate. 2019a. “2889 – National Security and Personal Data Protection Act of 2019.” Bill introduced Nov 18.

- US Senate. 2019b. “2314 – SMART Act.” Bill introduced Jul 30.

- US Senate. 2019c. “1916 – Protecting Children from Online Predators Act of 2019.” Bill introduced Jun 20.

- US Senate. 2019d. “1914 – Ending Support for Internet Censorship Act.” Bill introduced Jun 19.

- US Senate. 2019e. “1629 – A bill to regulate certain pay-to-win microtransactions and sales of loot boxes in interactive digital entertainment products, and for other purposes.” Bill introduced May 23.

- US Senate. 2019f. “1578 – Do Not Track Act.” Bill introduced May 21.

- US Senate. 2019g. “748 – A bill to amend the Children’s Online Privacy Protection Act of 1998 to strengthen protections relating to the online collection, use, and disclosure of personal information of children and minors, and for other purposes.” Bill introduced Mar 12.

- Wacker, Mike. 2019. “The Other Side of Kevin Cernekee.” Medium (Aug 6).

- Waldman, Peter, Lizette Chapman, and Jordan Robertson. 2018. “Palantir Knows Everything About You.” Bloomberg Businessweek (Apr 19).

- Walser, Martin. 2015. Unser Auschwitz: Auseinandersetzung mit der deutschen Schuld. Edited by Andreas Meier. Berlin: Rowohlt.

- Walser, Martin, and Ignaz Bubis. 1999. Die Walser Bubis Debatte. Edited by Frank Schirrmacher. Frankfurt am Main: Suhrkamp Verlag.

- Walsh, Michael. 2016. The Devil’s Pleasure Palace: The Cult of Critical Theory and the Subversion of the West. New York: Encounter Books.

- Wiener, Anna. 2017. “Why Protestors Gathered Outside Peter Thiel’s Mansion.” The New Yorker (Mar 14).

- Winston, Ali. 2018. “Palantir Has Secretly Been Using New Orleans to Test its Predictive Policing Technology.” The Verge (Feb 27).

- Woodman, Spencer. 2017. “Palantir Provides the Engine for Trump’s Deportation Machine.” The Intercept (Mar 2).